Hiring partners tell us all the time that they want candidates who are excited about the field of autonomous vehicles. That’s part of what makes the Udacity Self-Driving Car Engineer Nanodegree Program so impressive — students from around the world have sought out the program in order to learn about the field.

In addition to the twelve different projects students must pass to earn the Nanodegree credential, many of our students go even further and build independent projects of their own.

Here are a few projects that different students have undertaken. Maybe they can inspire you to build your own independent project!

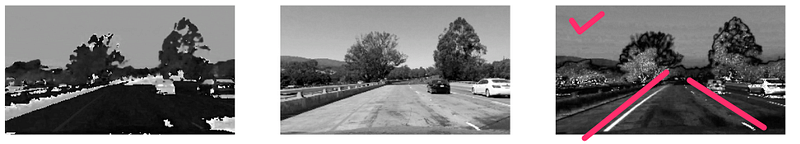

Lane Detection with Deep Learning (Part 1)

Michael is a student in both the Udacity Self-Driving Car Nanodegree Program and the Udacity Machine Learning Nanodegree Program. For his MLND capstone project, he built a neural network to detect lanes on the road.

This blog post is the first in a two-part series. Part 1 is all about collecting and labeling data, which is a major task in any machine learning project. In case the suspense is killing you, here’s Part 2, in which Michael uses convolutional layer visualization, transfer learning, and finally a segmentation network to build a lane-finding model.

Building Self-Driving RC Car Series #1 — Equipment & Plan

For anybody who is interested in building their own mini self-driving car, Yazeed has put together a five-part series on how he built his. Part 1: Equipment & Plan. Part 2: Hardware Setup. Part 3: Manual Control Using Raspberry Pi & Python. Part 4: Everything In Place. Part 5: Serverless Control Using Computer Vision 🙂

Building a Bayesian deep learning classifier

Kyle wrote up a deep and detailed blog post about modifying deep neural networks to incorporate uncertainty. Uncertainty is a core component of Bayesian logic, and we use uncertainty in algorithms like Kalman filters, which are crucial for fusing data from multiple sensors. Kyle follows guidance from the machine learning group at Cambridge University to compare differences in softmax activation functions and ultimately develop a confidence measure for classification values.
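
To make the sensor-fusion point concrete, here is a minimal sketch of the measurement-update step of a one-dimensional Kalman filter. It is not taken from Kyle's post; the function name `fuse` and the lidar and radar readings are made up for illustration. It assumes both sensors measure the same quantity and that their noise is Gaussian.

```python
def fuse(mean_a, var_a, mean_b, var_b):
    """Combine two Gaussian estimates of the same quantity.

    This is the 1-D Kalman measurement update: the estimate with the
    smaller variance (less uncertainty) pulls the result toward itself.
    """
    gain = var_a / (var_a + var_b)            # how much to trust estimate b
    mean = mean_a + gain * (mean_b - mean_a)  # shift a toward b by the gain
    var = (1.0 - gain) * var_a                # fused variance always shrinks
    return mean, var


# Hypothetical distance readings in meters: (mean, variance).
lidar = (10.0, 1.0)  # assumed to be the more precise sensor
radar = (12.0, 4.0)  # assumed to be the noisier sensor

fused_mean, fused_var = fuse(*lidar, *radar)
print(fused_mean, fused_var)
```

The fused estimate lands closer to the more certain sensor, and its variance is lower than either input, which is why tracking uncertainty explicitly pays off.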

Build your own self driving (toy) car

Bogdan constructed his own mini self-driving car using the Donkey hardware, but then built his own software stack. He got ROS running on a Raspberry Pi (!!) and trained a behavioral cloning neural network.

HomographyNet: Deep Image Homography Estimation

Mez implemented a paper from the team at Magic Leap on estimating homography with deep learning. Homography is the mapping of two different perspectives onto each other. So if you take a photo of a statue from the north side, and one from the south side, can you tell that it’s the same statue and can you figure out how to generate an image from the east or west side? Magic Leap is an augmented reality company, and you can see why this would be an important skill in virtual worlds.
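
For readers new to the term, a homography can be written as a 3x3 matrix that maps pixel coordinates in one view to pixel coordinates in another. The sketch below only shows how such a matrix is applied once it is known; it is not Mez's network or the paper's method, which deals with estimating the mapping from images. The helper `apply_homography` and the example matrices are hypothetical.

```python
def apply_homography(H, x, y):
    """Map the point (x, y) through the 3x3 homography H (nested lists)."""
    # Lift the point to homogeneous coordinates (x, y, 1) and multiply by H.
    xh = H[0][0] * x + H[0][1] * y + H[0][2]
    yh = H[1][0] * x + H[1][1] * y + H[1][2]
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    # Divide by w to return to ordinary image coordinates.
    return xh / w, yh / w


# A pure translation: every point shifts by (2, 3).
H_shift = [[1.0, 0.0, 2.0],
           [0.0, 1.0, 3.0],
           [0.0, 0.0, 1.0]]

# A matrix with a perspective term in the bottom row: points farther
# along x are scaled down more, as if the plane tilts away from the camera.
H_tilt = [[1.0, 0.0, 0.0],
          [0.0, 1.0, 0.0],
          [0.5, 0.0, 1.0]]

shifted = apply_homography(H_shift, 1.0, 1.0)
tilted = apply_homography(H_tilt, 2.0, 2.0)
print(shifted, tilted)
```

The division by `w` is what separates a homography from a simple affine transform: it lets straight lines stay straight while parallel lines converge, as they do in a photograph.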