Enjoy a look at some of the projects our students are building, including Finding Lane Lines, Traffic Sign Classifier, Behavioral Cloning, and more!

Students in our Self-Driving Car Engineer Nanodegree program follow a project-based curriculum: from the moment they enroll, they tackle key challenges and topics by building specialized projects. Here are all of the projects they build!

Finding Lane Lines

This is the first project students complete, one week into the program.

They learn to work with images, color spaces, thresholds, and gradients, in order to find lane lines on the road.
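
To give a feel for thresholds and gradients, here is a minimal sketch in plain Python. It is not the project's code: the tiny 3x5 grayscale image, the threshold level of 200, and the helper names `threshold` and `horizontal_gradient` are all made up for illustration, and a real solution would more likely lean on NumPy and OpenCV.

```python
# Hypothetical grayscale "road": dark asphalt (20) with one bright lane stripe (230).
image = [
    [20, 20, 230, 20, 20],
    [20, 20, 230, 20, 20],
    [20, 20, 230, 20, 20],
]


def threshold(img, level):
    """Return a binary mask: 1 where a pixel is brighter than `level`, else 0."""
    return [[1 if px > level else 0 for px in row] for row in img]


def horizontal_gradient(img):
    """Central-difference gradient along x; border columns are left at 0."""
    grad = []
    for row in img:
        g = [0] * len(row)
        for x in range(1, len(row) - 1):
            g[x] = row[x + 1] - row[x - 1]
        grad.append(g)
    return grad


mask = threshold(image, 200)       # picks out the bright stripe itself
grad = horizontal_gradient(image)  # responds on either side of the stripe
```

The threshold mask marks the stripe's pixels, while the gradient is large just left and right of it, which is why the two cues are often combined when searching for lane edges.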

Stack: Python, NumPy, OpenCV

Traffic Sign Classifier

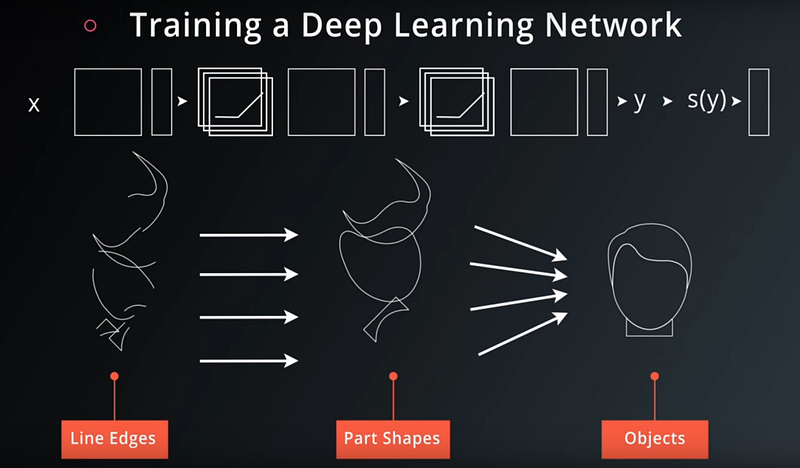

In this project, students train a convolutional neural network to classify traffic signs.

To do so, they use the German Traffic Sign Recognition Benchmark dataset. This particular student went above and beyond to train his network to not only classify signs, but also localize them within the image, and applied his classifier to a video.

Stack: Python, NumPy, TensorFlow

Behavioral Cloning

Here, students record training data by manually driving a car around a track in a simulator.

Then they use this camera, steering, and throttle data to train an end-to-end neural network for driving the vehicle, based on NVIDIA’s famous research paper.

Stack: Python, NumPy, Keras

Advanced Lane Finding

By applying advanced computer vision techniques, such as sliding window tracking, to a dashcam video, students are able to track lane lines on the road under a variety of challenging conditions.

Stack: Python, NumPy, OpenCV

Vehicle Detection and Tracking

Students use machine learning techniques and feature extraction to identify and track vehicles on a highway.

Stack: Python, NumPy, scikit-learn, OpenCV

Extended Kalman Filter

An extended Kalman filter merges noisy simulated radar and lidar data to track a vehicle.
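
The core idea of merging two noisy sensors can be sketched with a scalar, linear Kalman measurement update. This is a simplification, written in Python for brevity rather than the project's C++: an extended Kalman filter additionally linearizes nonlinear models, which this sketch omits. The function name `kalman_update` and all numbers are hypothetical.

```python
def kalman_update(mean, var, z, z_var):
    """Fuse a Gaussian estimate (mean, var) with a measurement z of variance z_var."""
    gain = var / (var + z_var)           # how much to trust the measurement
    new_mean = mean + gain * (z - mean)  # move the estimate toward the measurement
    new_var = (1.0 - gain) * var         # fusing always shrinks the uncertainty
    return new_mean, new_var


# Hypothetical prior: position 10 m with variance 4.
mean, var = 10.0, 4.0
# Hypothetical precise "lidar" reading, then a noisier "radar" reading.
mean, var = kalman_update(mean, var, 12.0, 1.0)
mean, var = kalman_update(mean, var, 11.0, 4.0)
```

Each update pulls the estimate toward the new reading in proportion to how trustworthy that reading is, so the precise sensor moves the estimate more than the noisy one.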

Stack: C++, Eigen

Unscented Kalman Filter

An unscented Kalman filter merges noisy, highly non-linear simulated radar and lidar data to track a vehicle.

Stack: C++, Eigen

Kidnapped Vehicle

Students develop a particle filter in C++ to probabilistically determine a vehicle's location relative to a sparse landmark map.
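
As a rough illustration of the technique, here is a one-dimensional particle filter sketch in Python (the project itself is in C++). Everything here is an assumption made for the example: a single landmark at 50 m, a vehicle parked at 20 m, Gaussian measurement noise, and the helper name `particle_filter_step`. It performs only the measurement update and resampling, with no motion model.

```python
import math
import random


def particle_filter_step(particles, measured_dist, landmark, sigma, rng):
    """Weight particles by measurement likelihood, then resample by weight."""
    weights = []
    for p in particles:
        expected = landmark - p  # signed distance this particle would observe
        err = measured_dist - expected
        weights.append(math.exp(-0.5 * (err / sigma) ** 2))
    if sum(weights) == 0.0:
        return list(particles)  # degenerate case: keep the old particle set
    return rng.choices(particles, weights=weights, k=len(particles))


rng = random.Random(0)   # fixed seed so the sketch is repeatable
landmark = 50.0          # hypothetical landmark position (m)
true_position = 20.0     # hypothetical true vehicle position (m)

# Start with no idea where the vehicle is: particles spread over the whole road.
particles = [rng.uniform(0.0, 100.0) for _ in range(1000)]

for _ in range(5):
    measurement = landmark - true_position + rng.gauss(0.0, 1.0)
    particles = particle_filter_step(particles, measurement, landmark, 1.0, rng)

estimate = sum(particles) / len(particles)
```

After a few noisy measurements, the surviving particles cluster near the true position, and their mean serves as the location estimate.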

Stack: C++

PID Controller

Students build and tune a proportional-integral-derivative controller to steer a vehicle around a test track, following a target trajectory.
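
A PID controller fits in a few lines. The sketch below is in Python rather than the project's C++, and it rests on loud assumptions: the gains are arbitrary, and the "plant" is a toy in which the cross-track offset changes at a rate equal to the steering command, which is far simpler than a simulated vehicle.

```python
class PID:
    """Minimal proportional-integral-derivative controller."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def step(self, error, dt):
        self.integral += error * dt
        # No derivative is available on the very first call.
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


pid = PID(kp=1.0, ki=0.1, kd=0.05)  # hypothetical, untuned gains
offset = 1.0                        # start 1 m away from the target trajectory
dt = 0.1

for _ in range(600):
    steer = pid.step(0.0 - offset, dt)  # error = target offset (0) - actual offset
    offset += steer * dt                # toy plant, not a vehicle model
```

Tuning is the real work in the project: the proportional term reacts to the current error, the integral term removes steady bias, and the derivative term damps overshoot.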

Stack: C++

Model Predictive Control

Students build and optimize a model predictive controller to steer a vehicle around a test track, following a target trajectory.

Stack: C++, Ipopt

Path Planning

In this project, students construct a path planner for highway driving based on a finite state machine.

The planner has three components: environmental prediction, maneuver selection, and trajectory generation.
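
The maneuver selection step can be pictured as a small finite state machine. The sketch below is in Python for brevity (the project uses C++), and it is only an illustration: the three states, the transition table, and the "pick the fastest reachable lane" rule are assumptions, not the project's design. The `lane_speeds` list stands in for the output of the prediction component.

```python
# Hypothetical states and the transitions allowed between them.
TRANSITIONS = {
    "KEEP_LANE": ["KEEP_LANE", "CHANGE_LEFT", "CHANGE_RIGHT"],
    "CHANGE_LEFT": ["KEEP_LANE"],
    "CHANGE_RIGHT": ["KEEP_LANE"],
}


def target_lane(state, lane):
    """Lane index the vehicle would occupy after taking `state` (0 = leftmost)."""
    if state == "CHANGE_LEFT":
        return lane - 1
    if state == "CHANGE_RIGHT":
        return lane + 1
    return lane


def choose_maneuver(state, lane, lane_speeds):
    """Pick the reachable state whose target lane has the highest predicted speed."""
    best_state, best_speed = None, -1.0
    for candidate in TRANSITIONS[state]:
        lane_after = target_lane(candidate, lane)
        if not 0 <= lane_after < len(lane_speeds):
            continue  # that maneuver would leave the road
        # Strict comparison: ties favor the earlier candidate (staying in lane).
        if lane_speeds[lane_after] > best_speed:
            best_state, best_speed = candidate, lane_speeds[lane_after]
    return best_state


# Hypothetical predicted lane speeds in m/s, left to right.
next_state = choose_maneuver("KEEP_LANE", 1, [25.0, 15.0, 20.0])
```

In a fuller planner, the chosen state would then be handed to trajectory generation, and the speed rule would be replaced by a cost function that also weighs safety and comfort.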

Stack: C++

Semantic Segmentation

Students train a pixel-wise segmentation network that labels and colors road pixels, marking the free space available for driving.

Stack: Python, TensorFlow

Safety Case

Students build a prototype of a safety case for a lane-keeping assistance ADAS feature, including the safety plan, hazard analysis and risk assessment, functional safety concept, technical safety concept, and software requirements.

Programming a Real Self-Driving Car

For this project, students form teams to drive a real self-driving car around the Udacity test track.

The car is required to negotiate a traffic light and follow a waypoint trajectory. Code is built first in the simulator, and then deployed to Udacity’s self-driving car in California.

Stack: Python, ROS, Autoware, TensorFlow

Would you like to be building these kinds of projects yourself? Then you should apply to the Udacity Self-Driving Car Engineer Nanodegree Program!