One of the highlights of the Udacity Self-Driving Car Engineer Nanodegree Program is the Behavioral Cloning Project.

In this project, each student uses the Udacity Simulator to drive a car around a track and record training data. Students use the data to train a neural network to drive the car autonomously. This is the same problem that world-class autonomous vehicle engineering teams are working on with real cars!

There are so many ways to tackle this problem. Here are six approaches that different Udacity students took.

Self-Driving Car Engineer Diary — 5

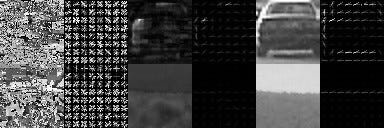

Andrew’s post highlights the differences between the Keras neural network framework and the TensorFlow framework. In particular, Andrew mentions how much he likes Keras:

“We were introduced to Keras and I almost cried tears of joy. This is the official high-level library for TensorFlow and takes much of the pain out of creating neural networks. I quickly added Keras (and Pandas) to my Deep Learning Pipeline.”

Self-Driving Car Simulator — Behavioral Cloning (P3)

Jean-Marc used extensive data augmentation to improve his model’s performance. In particular, he used images from offset cameras to create “synthetic cross-track error”. He built a small model-predictive controller to correct for this and train the model:

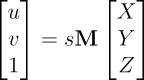

“A synthetic cross-track error is generated by using the images of the left and of the right camera. In the sketch below, s is the steering angle and C and L are the position of the center and left camera respectively. When the image of the left camera is used, it implies that the center of the car is at the position L. In order to recover its position, the car would need to have a steering angle s’ larger than s:

tan(s’) = tan(s) + (LC)/h”
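To get a feel for the size of this correction, here is a minimal sketch of the formula from Jean-Marc's quote. It is not his code: the function name and the sample numbers are hypothetical, angles are assumed to be in radians, and `h` is assumed to be a distance in the same units as the camera offset LC. Using a negative offset for the right camera is also an assumption made by symmetry.

```python
import math


def corrected_steering(s, lateral_offset, h):
    """Return s' such that tan(s') = tan(s) + lateral_offset / h.

    s              -- recorded steering angle, in radians (assumed unit)
    lateral_offset -- the distance LC; positive for the left camera and,
                      by assumed symmetry, negative for the right camera
    h              -- the distance h from the formula, same units as the offset
    """
    return math.atan(math.tan(s) + lateral_offset / h)


# Hypothetical numbers, chosen only for illustration.
center_angle = 0.05
left_angle = corrected_steering(center_angle, lateral_offset=0.5, h=10.0)
right_angle = corrected_steering(center_angle, lateral_offset=-0.5, h=10.0)

print(f"left: {left_angle:.4f}  center: {center_angle:.4f}  right: {right_angle:.4f}")
```

With these made-up values, the left-camera image is paired with a larger steering angle than the center image, which matches the "s’ larger than s" statement in the quote.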

Behavioral Cloning — Transfer Learning with Feature Extraction

Alena used transfer learning to build her end-to-end driving model on the shoulders of a famous neural network called VGG. Her approach worked great. Transfer learning is a really advanced technique and it’s exciting to see Alena succeed with it:

“I have chosen VGG16 as a base model for feature extraction. It has good performance and at the same time quite simple. Moreover it has something in common with popular NVidia and comma.ai models. At the same time use of VGG16 means you have to work with color images and minimal image size is 48×48.”
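Small inputs matter for VGG16 because the network halves the image five times. The sketch below is not Alena's code, and the function name is hypothetical. It assumes the standard VGG16 convolutional layout: same-padded 3×3 convolutions that keep the spatial size, and five 2×2 max-pooling stages with stride 2. It only traces how the feature map shrinks and does not by itself explain the exact 48×48 limit mentioned in the quote.

```python
def vgg16_feature_map_size(input_size, num_pool_stages=5):
    """Spatial size of the last feature map for a square input.

    Assumes each pooling stage halves the size (rounding down) and that
    the convolutions in between leave the size unchanged.
    """
    size = input_size
    for _ in range(num_pool_stages):
        size //= 2
    return size


for side in (48, 64, 224):
    out = vgg16_feature_map_size(side)
    print(f"{side}x{side} input gives a {out}x{out} feature map")
```

Under these assumptions a 48×48 image is already reduced to a single spatial position by the time it leaves the convolutional base, so there is little room to go smaller.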

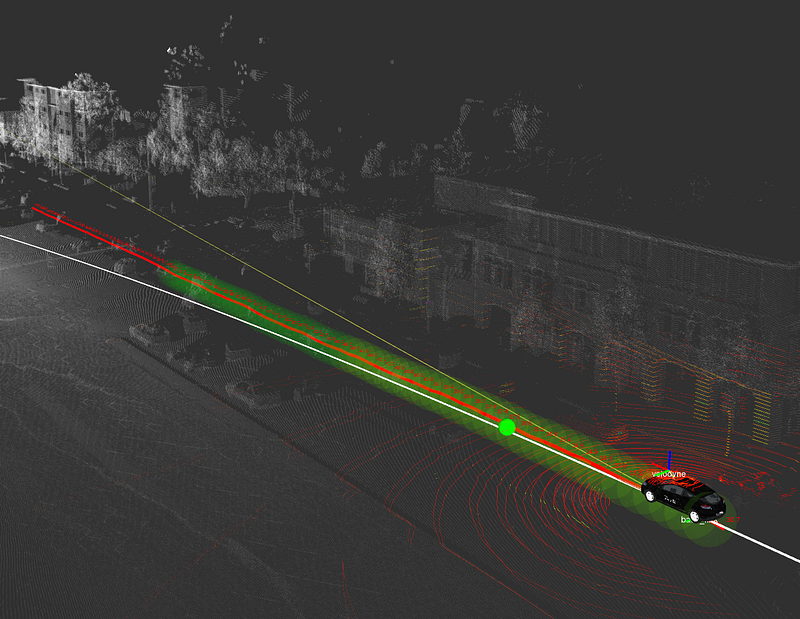

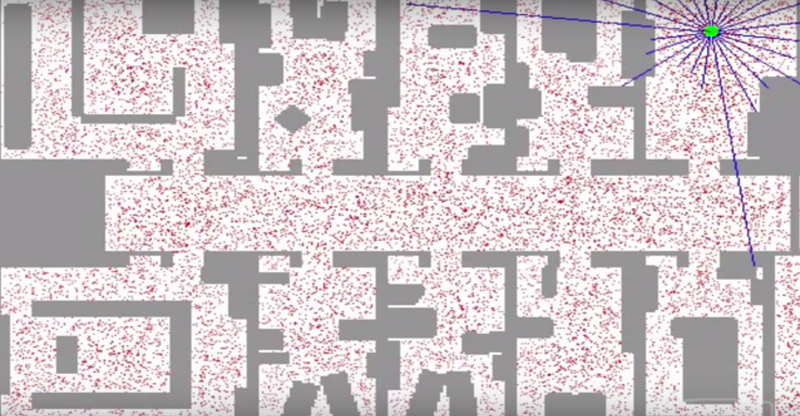

Introduction to Udacity Self-Driving Car Simulator

The Behavioral Cloning Project utilizes the open-source Udacity Self-Driving Car Simulator. In this post, Naoki introduces the simulator and dives into the source code. Follow Naoki’s instructions and build a new track for us!

“If you want to modify the scenes in the simulator, you’ll need to deep dive into the Unity projects and rebuild the project to generate a new executable file.”

MainSqueeze: The 52 parameter model that drives in the Udacity simulator

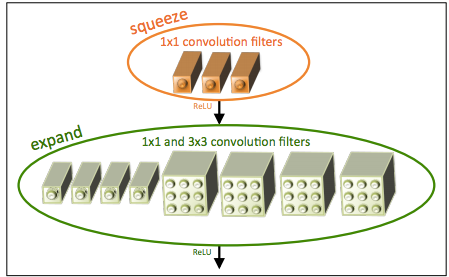

In this post, Mez explains the implementation of SqueezeNet for the Behavioral Cloning Project. This is the smallest network I’ve seen yet for this project. Only 52 parameters!

“With a squeeze net you get three additional hyperparameters that are used to generate the fire module:

1: Number of 1×1 kernels to use in the squeeze layer within the fire module

2: Number of 1×1 kernels to use in the expand layer within the fire module

3: Number of 3×3 kernels to use in the expand layer within the fire module”
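These three hyperparameters largely determine how many weights a fire module has. Here is a rough sketch that counts them. It is not Mez's code and does not attempt to reproduce his 52-parameter model: the function and argument names are hypothetical, and it assumes the usual fire-module layout, in which a 1×1 squeeze layer feeds two parallel expand layers (1×1 and 3×3) whose outputs are concatenated, with one bias per kernel.

```python
def fire_module_params(in_channels, squeeze_1x1, expand_1x1, expand_3x3, use_bias=True):
    """Return (trainable parameter count, output channels) for one fire module."""
    bias = 1 if use_bias else 0

    # Squeeze layer: 1x1 kernels applied to the module's input channels.
    squeeze = squeeze_1x1 * (in_channels * 1 * 1 + bias)

    # Expand layers: both read the squeeze layer's output channels.
    expand_small = expand_1x1 * (squeeze_1x1 * 1 * 1 + bias)
    expand_large = expand_3x3 * (squeeze_1x1 * 3 * 3 + bias)

    # The two expand outputs are concatenated along the channel axis.
    out_channels = expand_1x1 + expand_3x3
    return squeeze + expand_small + expand_large, out_channels


# Hypothetical tiny module on a 3-channel (color) input.
params, channels = fire_module_params(in_channels=3, squeeze_1x1=1, expand_1x1=1, expand_3x3=1)
print(params, channels)
```

Shrinking the three kernel counts is what lets a SqueezeNet-style model get so small: the 3×3 expand kernels cost nine weights per squeeze channel, so keeping the squeeze layer narrow keeps the whole module cheap.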

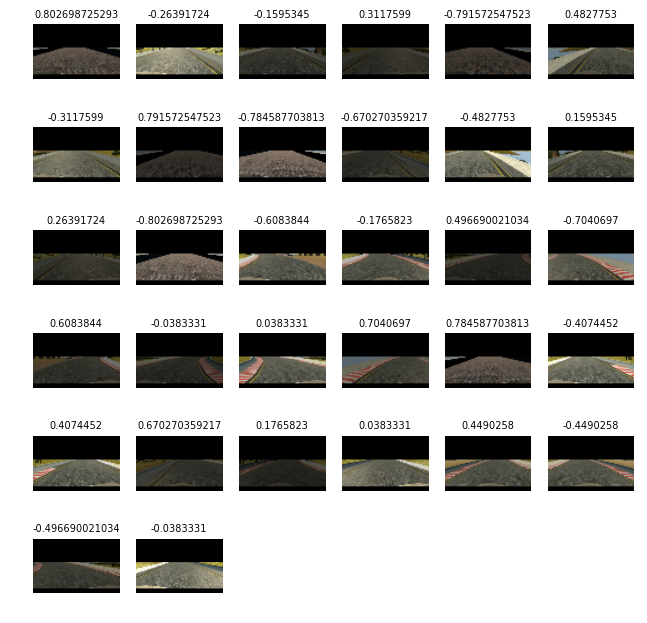

GTA V Behavioral Cloning 2

Renato ported his behavioral cloning network to Grand Theft Auto V. How cool is that?!