Update: Udacity has a new self-driving car curriculum! The post below is now out-of-date, but you can see the new syllabus here.

Last night we offered acceptances to thousands of students who are excited to join Udacity’s Self-Driving Car Nanodegree Program!

We are working hard to make this the world’s best training program for self-driving car engineers. The entire curriculum will consist of three terms over nine months. Here’s what’s in the program:

Term 1

Introduction

- Meet the instructors — Sebastian Thrun, Ryan Keenan, and myself. Learn about the systems that comprise a self-driving car, and the structure of the program.

- Project: Detect Lane Lines

Detect highway lane lines from a video stream. Use OpenCV image analysis techniques to identify lines, including Hough transforms and Canny edge detection.

Deep Learning

- Machine Learning: Review fundamentals of machine learning, including regression and classification.

- Neural Networks: Learn about perceptrons, activation functions, and basic neural networks. Implement your own neural network in Python.

- Logistic Classifier: Study how to train a logistic classifier using machine learning. Implement a logistic classifier in TensorFlow.

- Optimization: Investigate techniques for optimizing classifier performance, including validation and test sets, gradient descent, momentum, and learning rates.

- Rectified Linear Units: Evaluate activation functions and how they affect performance.

- Regularization: Learn techniques, including dropout, to avoid overfitting a network to the training data.

- Convolutional Neural Networks: Study the building blocks of convolutional neural networks, including filters, stride, and pooling.

- Project: Traffic Sign Classification

Implement and train a convolutional neural network to classify traffic signs. Use validation sets, pooling, and dropout to choose a network architecture and improve performance.

- Keras: Build a multi-layer convolutional network in Keras. Compare the simplicity of Keras to the flexibility of TensorFlow.

- Transfer Learning: Fine-tune pre-trained networks to solve your own problems. Study canonical networks such as AlexNet, VGG, GoogLeNet, and ResNet.

- Project: Behavioral Cloning

Architect and train a deep neural network to drive a car in a simulator. Collect your own training data and use it to clone your own driving behavior on a test track.

Computer Vision

- Cameras: Learn the physics of cameras, and how to calibrate, undistort, and transform image perspectives.

- Lane Finding: Study advanced techniques for lane detection with curved roads, adverse weather, and varied lighting.

- Project: Advanced Lane Detection

Detect lane lines in a variety of conditions, including changing road surfaces, curved roads, and variable lighting. Use OpenCV to implement camera calibration and transforms, as well as filters, polynomial fits, and splines.

- Support Vector Machines: Implement support vector machines and apply them to image classification.

- Decision Trees: Implement decision trees and apply them to image classification.

- Histogram of Oriented Gradients: Implement histogram of oriented gradients and apply it to image classification.

- Deep Neural Networks: Compare the classification performance of support vector machines, decision trees, histogram of oriented gradients, and deep neural networks.

- Vehicle Tracking: Review how to apply image classification techniques to vehicle tracking, along with basic filters to integrate vehicle position over time.

- Project: Vehicle Tracking

Track vehicles in camera images using image classifiers such as SVMs, decision trees, HOG, and DNNs. Apply filters to fuse position data.

Term 2

Sensor Fusion

Our terms are broken out into modules, which are in turn comprised of a series of focused lessons. This Sensor Fusion module is built with our partners at Mercedes-Benz. The team at Mercedes-Benz is amazing. They are world-class automotive engineers applying autonomous vehicle techniques to some of the finest vehicles in the world. They are also Udacity hiring partners, which means the curriculum we’re developing together is expressly designed to nurture and advance the kind of talent they would like to hire!

Below please find descriptions of each of the lessons that together comprise our Sensor Fusion module:

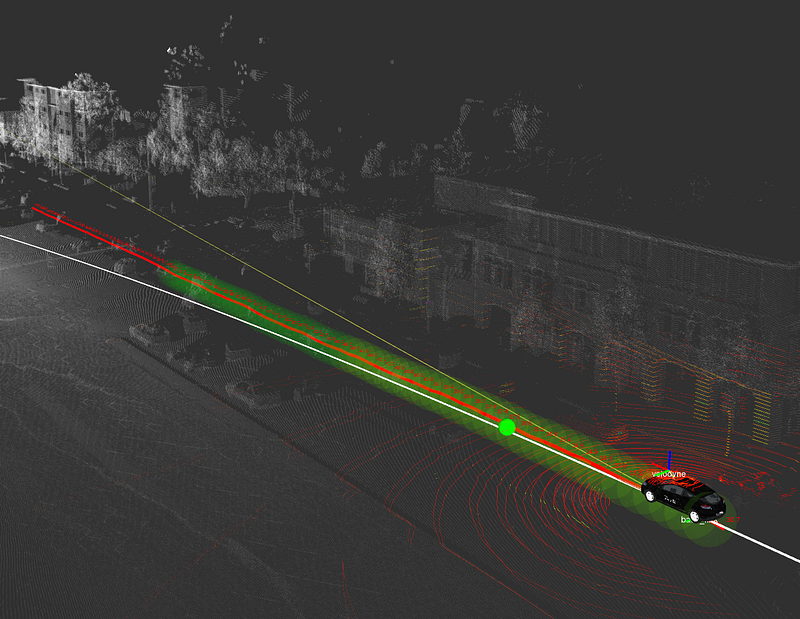

- Sensors

The first lesson of the Sensor Fusion module covers the physics of two of the most important sensors on an autonomous vehicle: radar and lidar.

- Kalman Filters

Kalman filters are the key mathematical tool for fusing together data. Implement these filters in Python to combine measurements from a single sensor over time.

- C++ Primer

Review the key C++ concepts for implementing the Term 2 projects.

- Project: Extended Kalman Filters in C++

Extended Kalman filters are used by autonomous vehicle engineers to combine measurements from multiple sensors into a non-linear model. Building an EKF is an impressive skill to show an employer.

- Unscented Kalman Filter

The Unscented Kalman filter is a mathematically sophisticated approach for combining sensor data. The UKF performs better than the EKF in many situations. This is the type of project sensor fusion engineers have to build for real self-driving cars.

- Project: Pedestrian Tracking

Fuse noisy lidar and radar data together to track a pedestrian.
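To give a flavor of the Kalman Filters lesson listed above, here is a minimal one-dimensional sketch in Python. It is only an illustration under simplifying assumptions (a single scalar state that stays roughly constant, Gaussian noise with known variances), not the course's implementation. The function name `kalman_1d` and all of its parameters are hypothetical names chosen for this example.

```python
def kalman_1d(measurements, meas_var, process_var, init_mean=0.0, init_var=1000.0):
    """Fuse noisy scalar measurements from one sensor over time.

    Assumption for this sketch: the true value is (nearly) constant, so the
    prediction step keeps the mean and only inflates the variance.
    Returns a list of (mean, variance) pairs, one per measurement.
    """
    mean, var = init_mean, init_var
    estimates = []
    for z in measurements:
        # Predict: the state is assumed static; uncertainty grows by the process noise.
        var += process_var

        # Update: the Kalman gain weighs the prediction against the new measurement.
        gain = var / (var + meas_var)
        mean = mean + gain * (z - mean)
        var = (1.0 - gain) * var

        estimates.append((mean, var))
    return estimates


if __name__ == "__main__":
    # Hypothetical range readings (in meters) of a stationary object.
    readings = [5.1, 4.9, 5.2, 4.8, 5.0]
    for step, (mean, var) in enumerate(kalman_1d(readings, meas_var=0.04, process_var=0.0), 1):
        print(f"step {step}: mean={mean:.3f} variance={var:.4f}")
```

Each new measurement pulls the estimate toward the data and shrinks the variance. The extended and unscented variants named above build on the same predict and update cycle for non-linear models.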

Localization

This module is also built with our partners at Mercedes-Benz, who employ cutting-edge localization techniques in their own autonomous vehicles. Together we show students how to implement and use foundational algorithms that every localization engineer needs to know.

Here are the lessons in our Localization module:

- Motion

Study how motion and probability affect your belief about where you are in the world. - Markov Localization

Use a Bayesian filter to localize the vehicle in a simplified environment. - Egomotion

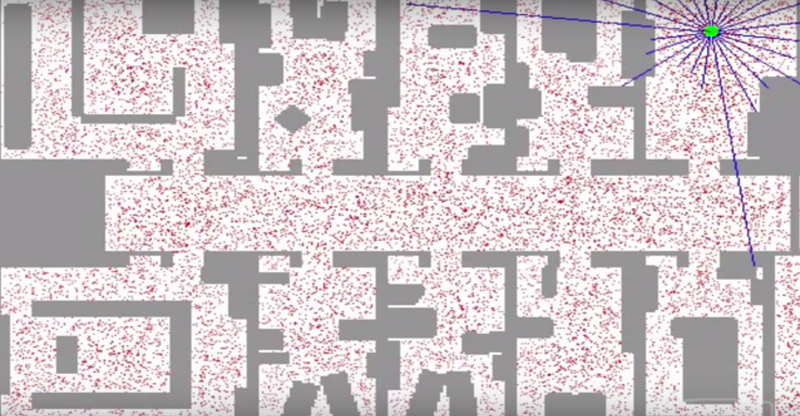

Learn basic models for vehicle movements, including the bicycle model. Estimate the position of the car over time given different sensor data. - Particle Filter

Use a probabilistic sampling technique known as a particle filter to localize the vehicle in a complex environment. - High-Performance Particle Filter

Implement a particle filter in C++. - Project: Kidnapped Vehicle

Implement a particle filter to take real-world data and localize a lost vehicle.
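The Markov Localization lesson above can be illustrated with a small discrete Bayesian (histogram) filter. The sketch below is a toy under stated assumptions, not the course material: the world is a short cyclic row of colored cells, the sensor reports a color, and the hit, miss, and motion probabilities are made-up values. The names `sense`, `move`, and `localize` are hypothetical.

```python
def sense(belief, world, measurement, p_hit=0.6, p_miss=0.2):
    """Measurement update: reweight each cell by how well it explains the reading."""
    weighted = [
        b * (p_hit if cell == measurement else p_miss)
        for b, cell in zip(belief, world)
    ]
    total = sum(weighted)
    return [w / total for w in weighted]  # normalize so the belief sums to 1


def move(belief, step, p_exact=0.8, p_undershoot=0.1, p_overshoot=0.1):
    """Motion update on a cyclic world: shift the belief, blurring it for motion noise."""
    n = len(belief)
    moved = []
    for i in range(n):
        prob = (
            p_exact * belief[(i - step) % n]                # moved exactly `step` cells
            + p_undershoot * belief[(i - step + 1) % n]     # moved one cell too few
            + p_overshoot * belief[(i - step - 1) % n]      # moved one cell too many
        )
        moved.append(prob)
    return moved


def localize(world, measurements, motions):
    """Alternate sense and move steps, starting from a uniform belief."""
    belief = [1.0 / len(world)] * len(world)
    for measurement, step in zip(measurements, motions):
        belief = sense(belief, world, measurement)
        belief = move(belief, step)
    return belief


if __name__ == "__main__":
    world = ["green", "red", "red", "green", "green"]
    belief = localize(world, measurements=["red", "red"], motions=[1, 1])
    print([round(p, 3) for p in belief])
```

A particle filter follows the same sense and move rhythm, but represents the belief with random samples instead of one probability per cell, which scales better to complex environments.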

Control

This module is built with our partners at Uber Advanced Technologies Group. Uber is one of the fastest-moving companies in the autonomous vehicle space. They are already testing their self-driving cars in multiple locations in the US, and they’re excited to introduce students to the core control algorithms that autonomous vehicles use. Uber ATG is also a Udacity hiring partner, so pay attention to their lessons if you want to work there!

Here are the lessons:

- Control

Learn how control systems actuate a vehicle to move it on a path.

- PID Control

Implement the classic closed-loop controller, a proportional-integral-derivative control system.

- Linear Quadratic Regulator

Implement a more sophisticated control algorithm for stabilizing the vehicle in a noisy environment.

- Project: Lane-Keeping

Implement a controller to keep a simulated vehicle in its lane. For an extra challenge, use computer vision techniques to identify the lane lines and estimate the cross-track error.
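As a rough sketch of the PID Control lesson above, the Python below drives a cross-track error toward zero. Everything about the simulated vehicle is an assumption made for illustration: the controller output is treated as a lateral acceleration, the dynamics are a toy double integrator, and the gains are arbitrary. The names `PIDController` and `simulate_lane_keeping` are hypothetical, not part of the project code.

```python
class PIDController:
    """Proportional-integral-derivative controller acting on a scalar error."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, error, dt):
        """Return a control signal that pushes the error toward zero."""
        self.integral += error * dt
        # No derivative is available on the very first call.
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return -(self.kp * error + self.ki * self.integral + self.kd * derivative)


def simulate_lane_keeping(kp=2.0, ki=0.0, kd=3.0, steps=200, dt=0.1, start_offset=1.0):
    """Toy model (an assumption): control acts as lateral acceleration on the offset.

    Returns the remaining offset from the lane center after `steps` updates.
    """
    controller = PIDController(kp, ki, kd)
    offset, lateral_velocity = start_offset, 0.0
    for _ in range(steps):
        accel = controller.update(offset, dt)   # cross-track error is the offset itself
        lateral_velocity += accel * dt
        offset += lateral_velocity * dt
    return offset


if __name__ == "__main__":
    print(f"remaining offset: {simulate_lane_keeping():.6f}")
```

The proportional term steers back toward the lane center, the derivative term damps the overshoot, and the integral term (left at zero here) would matter if the vehicle had a steady bias such as a steering misalignment.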

Term 3

Path Planning

Elective

Systems

Term 2 and Term 3 are under construction and we’ll share more details on those as we finalize the curriculum and projects.

[Update: Term 2 and Term 3 are live!]

All of this, including Term 1, is subject to change as we update the curriculum over time, because part of building a great course is taking feedback and making improvements!

If you’ve been accepted into the course, congratulations! We are excited to teach you.

If we suggested you brush up on a few topics and take a self-assessment before joining the course, please do! We are excited to teach you and want to make sure you have a great experience.

And if you haven’t yet applied, please do! We are taking applications for the 2017 cohorts and would love to have you in the class.