Here are some thorough walkthroughs of how to implement lane-finding and end-to-end learning, with all sorts of corner cases.

Want to be like these all-stars? Join the Udacity Self-Driving Car Nanodegree Program!

Self-driving Cars — Advanced computer vision with OpenCV, finding lane lines

Ricardo’s lane-finding pipeline works amazingly well on the challenge video for the Advanced Lane Finding Project. He gives an incredibly thorough rundown of each stage of his pipeline (calibration, undistortion, color transforms, perspective transform, lane detection, curvature, and unwarping), including this tip on tracking lanes from frame to frame:

“Once you know where the lines are in one frame of video, you can do a highly targeted search for them in the next frame. This is equivalent to using a customized region of interest for each frame of video, which helps to track the lanes through sharp curves and tricky conditions.”
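Ricardo's code isn't reproduced here. The sketch below is a minimal NumPy illustration of the targeted search he describes, assuming a binary bird's-eye-view image in which lane pixels are nonzero, and a second-order fit x = Ay^2 + By + C carried over from the previous frame. The function names, the 100-pixel margin and the fallback behavior are hypothetical choices, not taken from his pipeline. It also computes the radius of curvature R = (1 + (2Ay + B)^2)^1.5 / |2A|, in pixels; getting meters would require a meters-per-pixel scale for your particular camera setup.

```python
import numpy as np

def search_around_poly(binary_warped, prev_fit, margin=100):
    """Keep only nonzero pixels within `margin` px (horizontally) of last frame's line."""
    # Row (y) and column (x) indices of every lane-candidate pixel.
    nonzero_y, nonzero_x = binary_warped.nonzero()
    # In a bird's-eye view, lane lines are near-vertical, so the fit is x = f(y).
    prev_x = np.polyval(prev_fit, nonzero_y)
    in_window = np.abs(nonzero_x - prev_x) < margin
    return nonzero_x[in_window], nonzero_y[in_window]

def refit_line(binary_warped, prev_fit, margin=100, min_pixels=3):
    """Fit x = A*y**2 + B*y + C to the pixels found near the previous fit."""
    x, y = search_around_poly(binary_warped, prev_fit, margin)
    if len(x) < min_pixels:
        # Too few pixels: keep the old fit (a real pipeline might fall back
        # to a full sliding-window search instead).
        return prev_fit
    return np.polyfit(y, x, 2)

def radius_of_curvature(fit, y_eval):
    """R = (1 + (2Ay + B)^2)^1.5 / |2A|, in pixel units."""
    a, b, _ = fit
    return (1 + (2 * a * y_eval + b) ** 2) ** 1.5 / abs(2 * a)
```

Because only pixels near last frame's line are considered, stray edges elsewhere in the image (shadows, the car in the next lane) never enter the fit, which is exactly the "customized region of interest" effect the quote describes.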

Deep Learning/Gaming Build with NVIDIA Titan Xp and MacBook Pro with Thunderbolt2

I just met Yazeed yesterday at NVIDIA’s GPU Technology Conference, and then I found his blog post today. He loves GPUs and deep learning so much he flew all the way from Saudi Arabia for the conference!

“For me it was a gamble to buy a $1,200 Titan Xp, which had been released just 17 hours before I bought it, with only a promise from NVIDIA to support macOS, and I don’t have the Thunderbolt3 port, which is the supported port for an eGPU. So, as Richard Branson said, ‘Screw It, Let’s Do It’, and it works like a charm. Without further ado, let’s dive in.”

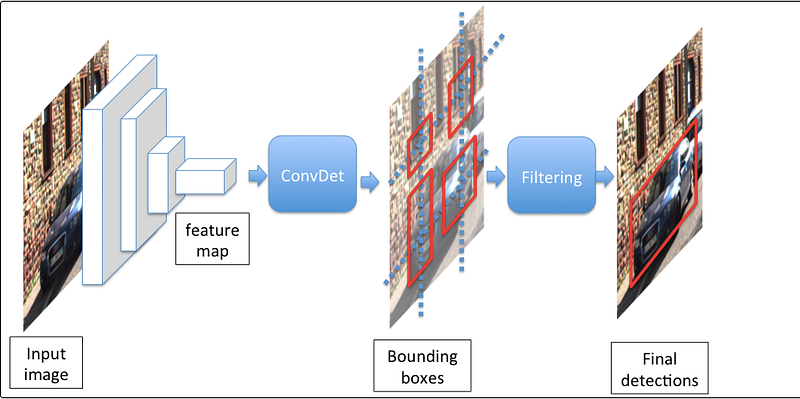

SqueezeDet: Deep Learning for Object Detection

A while back Mez published his results on behavioral cloning with SqueezeNet, and now he’s back with a super-enthusiastic blog post on his network. Only 52 parameters and 6-second epochs on a CPU!

“One good rule of thumb I developed from this project is to try and reduce the number of variables you are tuning to gain better results faster.”

Behavioral Cloning

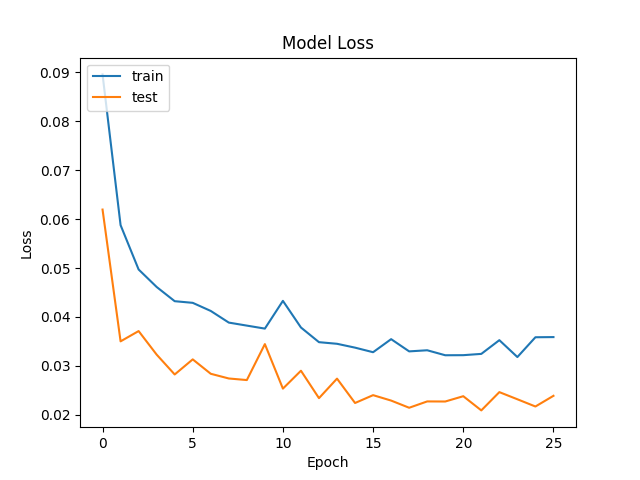

Arsen has a great writeup on using a neural network to predict both steering and throttle values for the Behavioral Cloning Project. He also uses early stopping to prevent overfitting.

“The validation set helped determine if the model was over or under fitting. I used EarlyStopping (utils.py line 299) to stop training when validation mse has stopped improving.”
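Arsen's utils.py isn't shown here, so his exact settings are unknown. In Keras the built-in version is `keras.callbacks.EarlyStopping(monitor='val_loss', patience=...)`, optionally with `restore_best_weights=True`. The sketch below spells out the same patience-based logic in plain Python so it's clear what the callback is doing; the class name, the patience of 3 and the replayed loss values are illustrative assumptions.

```python
class EarlyStopper:
    """Stop once validation loss hasn't improved for `patience` epochs in a row."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta  # smallest drop that counts as an improvement
        self.best = float("inf")
        self.best_epoch = None
        self.wait = 0  # epochs since the last improvement

    def should_stop(self, epoch, val_loss):
        if val_loss < self.best - self.min_delta:
            # New best: remember it (a real loop would also checkpoint weights here).
            self.best = val_loss
            self.best_epoch = epoch
            self.wait = 0
        else:
            self.wait += 1
        return self.wait >= self.patience

def train_with_early_stopping(val_losses, patience=3):
    """Replay per-epoch validation MSEs; return (stop_epoch, best_epoch)."""
    stopper = EarlyStopper(patience)
    for epoch, loss in enumerate(val_losses):
        if stopper.should_stop(epoch, loss):
            return epoch, stopper.best_epoch
    return len(val_losses) - 1, stopper.best_epoch
```

For example, with validation MSEs of 0.05, 0.04, 0.035, 0.036, 0.037, 0.038 and a patience of 3, training stops after the sixth epoch (index 5) and the best model is the one from epoch index 2. Patience trades a few wasted epochs for robustness against a single noisy validation reading.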

CarND Behavioral Cloning

JC’s writeup of his Behavioral Cloning Project covers a really important topic — how to debug, or at least visualize, what’s going on inside a neural network.

“During the process of training, I felt very uneasy, as it is almost like a black box. Whenever the model failed to proceed at a certain spot, it was very hard to tell what went wrong. Although my model passed both tracks, the process of trial and error, meddling with different combinations of configurations, was quite frustrating.”
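JC's own visualization code isn't reproduced here. One common way to peek inside a convolutional network is to display the activations of an early conv layer for a given camera frame. The minimal NumPy sketch below computes one such feature map by hand, assuming a grayscale image and a single 3x3 filter followed by ReLU; the vertical-edge filter is a hand-picked stand-in for a learned one (with a trained model you would take the kernel weights, or the layer's output directly, from the network).

```python
import numpy as np

def conv2d_valid(image, kernel):
    """2D cross-correlation (what CNN 'conv' layers compute): no padding, stride 1."""
    kh, kw = kernel.shape
    h, w = image.shape
    out = np.zeros((h - kh + 1, w - kw + 1), dtype=np.float64)
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

def feature_map_for_display(image, kernel):
    """Conv + ReLU, then rescale to 0..255 so the map can be shown as an image."""
    act = np.maximum(conv2d_valid(image.astype(np.float64), kernel), 0.0)  # ReLU
    peak = act.max()
    if peak == 0:
        # Filter never fired: return an all-black map instead of dividing by zero.
        return np.zeros(act.shape, dtype=np.uint8)
    return (255 * act / peak).astype(np.uint8)
```

Bright pixels mark where the filter fires. For a steering model, the hope is that maps like this light up along lane edges rather than on trees or sky; if they don't, that is a useful clue about why the car drifts at a particular spot.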