Tremendous posts this week from Udacity Self-Driving Car Engineer Nanodegree Program students.

If you want to learn what these folks are learning, apply to join us!

My first step towards building a Self Driving Car !!

Sujay has an easy-to-follow recipe for building the first project in the Nanodegree Program — a software pipeline for finding lane lines:

“1. Convert the image to gray scale

2. Apply Gaussian blur to the image to smoothen the image

3. Apply canny edge detection algorithm

4. Apply a filter to remove the unwanted area in the image, like the one above the horizon

5. Apply hough transform and plot the lines that are formed from the points detected in the canny edge step.

6. Based on the slope find the left lines and right lines

7. Find the largest left and right lines.

8. Consider an imaginary horizontal line in the middle of image and another line at the bottom of the image.

9. Find the intersect of largest left and right lines on these imaginary lines using the Cramer’s rule. Plot a line with these intersect point.”
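Steps 6 through 9 of that recipe can be sketched in plain Python. This is a minimal illustration under stated assumptions, not Sujay's actual code: segments are assumed to arrive as `(x1, y1, x2, y2)` tuples (the shape a probabilistic Hough transform typically returns), "largest" is read as "longest", and image coordinates are assumed to have y growing downward, so left lane lines have negative slope. All function names and the sample segments are hypothetical.

```python
import math


def to_standard_form(seg):
    """Convert a segment (x1, y1, x2, y2) to (a, b, c) with a*x + b*y = c."""
    x1, y1, x2, y2 = seg
    a = y2 - y1
    b = x1 - x2
    return a, b, a * x1 + b * y1


def cramer_intersection(line1, line2):
    """Intersect two lines in (a, b, c) form using Cramer's rule."""
    a1, b1, c1 = line1
    a2, b2, c2 = line2
    det = a1 * b2 - a2 * b1
    if det == 0:
        return None  # parallel or identical lines: no unique intersection
    return (c1 * b2 - c2 * b1) / det, (a1 * c2 - a2 * c1) / det


def split_by_slope(segments):
    """Step 6: negative slope -> left lane, positive slope -> right lane."""
    left, right = [], []
    for seg in segments:
        x1, y1, x2, y2 = seg
        if x1 == x2:
            continue  # skip vertical segments (undefined slope)
        slope = (y2 - y1) / (x2 - x1)
        if slope < 0:
            left.append(seg)
        elif slope > 0:
            right.append(seg)
    return left, right


def longest(segments):
    """Step 7: pick the longest segment by Euclidean length."""
    return max(segments, key=lambda s: math.hypot(s[2] - s[0], s[3] - s[1]))


def lane_endpoints(seg, y_top, y_bottom):
    """Steps 8-9: intersect the segment's line with two horizontal lines."""
    line = to_standard_form(seg)
    top = cramer_intersection(line, (0, 1, y_top))        # line y = y_top
    bottom = cramer_intersection(line, (0, 1, y_bottom))  # line y = y_bottom
    return top, bottom


# Hypothetical Hough output for a 600-pixel-tall image.
segments = [
    (200, 525, 400, 375),  # long left-lane segment
    (300, 450, 340, 420),  # short left-lane segment
    (700, 375, 900, 525),  # long right-lane segment
    (760, 420, 780, 435),  # short right-lane segment
]
left, right = split_by_slope(segments)
print(lane_endpoints(longest(left), 300, 600))   # ((500.0, 300.0), (100.0, 600.0))
print(lane_endpoints(longest(right), 300, 600))  # ((600.0, 300.0), (1000.0, 600.0))
```

The returned endpoint pairs are what you would hand to a drawing call to plot each lane line from the bottom of the image up to the middle.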

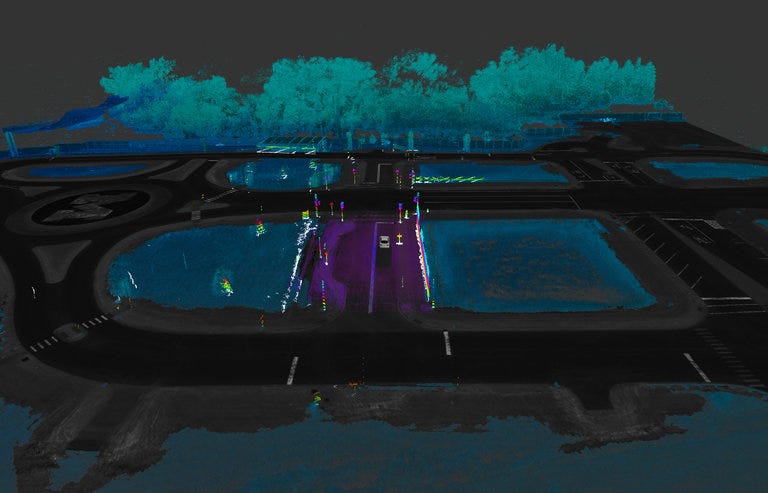

The Grand Prix of Nevada of autonomous vehicles

Arnaldo presents a gripping retelling of the 2005 DARPA Grand Challenge! If you want the full version, you can watch The Great Robot Race, but for the CliffsNotes version:

“And the inevitable happens. The most epic moment in the history of autonomous cars! The first overtaking in the history of autonomous cars in the planet! Stanley overtakes Highlander! Ayrton Senna overtakes Alain Proust (sorry Frenchs, I’m Brazilian)!”

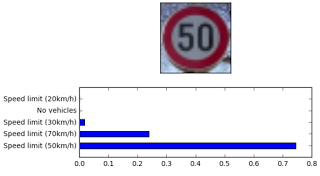

Transfer Learning for Behavioral Cloning

Kosuke provides a great guide to using transfer learning for a behavioral cloning problem. Specifically, Kosuke uses VGG16 and applies techniques like batch normalization and parameter reduction:

“The model based on transfer learning could be really sensitive to noise data although it is not validated in any papers. In conclusion, cleaning data made my model much better.”

Detecting Lane Lines in Python with OpenCV

David has a super easy-to-follow post about how to find lane lines on the road, which is the first project in the Udacity Self-Driving Car Engineer Nanodegree Program:

“We just stepped through a practical image processing pipeline using opencv and python! It goes without saying, this is not road ready, but it’s exciting to see how much we can do with a little image manipulating and line filtering logic. What a nice starting point.”

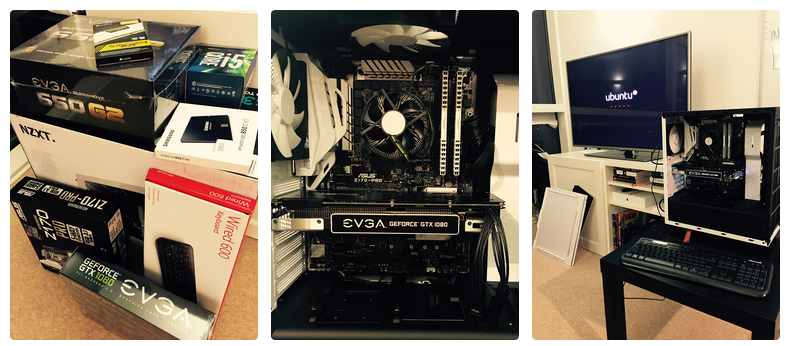

Meet Fenton (my data crunching machine)

Alex’s post goes above and beyond other posts that walk through building your own deep learning machine. He provides an add-on section that describes how to set up the machine as an always-on server, accessible from anywhere:

“Arguably the most important step is picking your machine’s name. I named mine after this famous dog, probably because when making my first steps in data science, whenever my algorithm failed to learn I felt just as desperate and helpless as Fenton’s owner. Fortunately, this happens less and less often these days!”