Gizmodo has a short writeup of a crash in which Tesla Autopilot hit the brakes before the human driver realized what was going on, thereby avoiding a pileup.

Gizmodo calls it clairvoyant. I call it good machine learning.

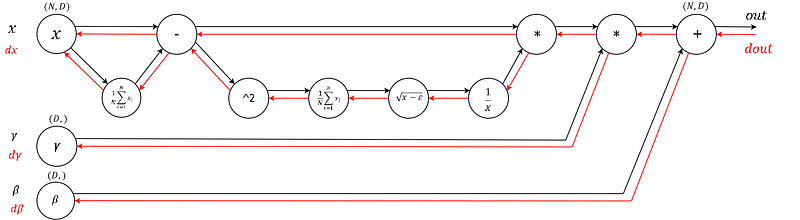

Backpropagation is a leaky abstraction; it is a credit assignment scheme with non-trivial consequences. If you try to ignore how it works under the hood because “TensorFlow automagically makes my networks learn”, you will not be ready to wrestle with the dangers it presents, and you will be much less effective at building and debugging neural networks.

That is from “Yes you should understand backprop,” by the excellent Andrej Karpathy.

I say it’s possible to use deep neural networks quite effectively without truly understanding backprop. But if your goal is to specialize in the field and apply this tool to a range of problems, then “yes you should understand backprop”.
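Karpathy's post walks through several of these consequences. One he highlights is the sigmoid: its local gradient is z(1 - z), which never exceeds 0.25 and collapses toward zero once the unit saturates. Below is a minimal single-neuron forward and backward pass in plain Python. The function names are illustrative and do not come from any library. It shows how a large weight starves that same weight of gradient.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid, 1 / (1 + e^-x)."""
    return 1.0 / (1.0 + math.exp(-x))


def neuron_backward(w: float, x: float, b: float, upstream: float = 1.0):
    """Forward and backward pass for one neuron z = sigmoid(w * x + b).

    `upstream` is dL/dz, the gradient arriving from later layers.
    Returns (z, dL/dw, dL/db, dL/dx).
    """
    z = sigmoid(w * x + b)
    local = z * (1.0 - z)      # dz/d(w*x + b); never larger than 0.25
    dw = upstream * local * x  # chain rule through the multiply by x
    db = upstream * local      # chain rule through the bias add
    dx = upstream * local * w  # gradient passed further back
    return z, dw, db, dx


if __name__ == "__main__":
    # Same input, growing weight: the unit saturates and dL/dw collapses.
    for w in (0.0, 0.5, 5.0, 50.0):
        z, dw, _, _ = neuron_backward(w=w, x=1.0, b=0.0)
        print(f"w={w:5.1f}  z={z:.6f}  dL/dw={dw:.3e}")
```

At w = 50 the sigmoid output rounds to exactly 1.0 in double precision, so dL/dw is exactly zero and gradient descent never moves that weight. A framework computes this same gradient automatically. Knowing the formula is what lets you recognize the problem when training stalls.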

By the way, @karpathy is a prolific Twitter feed with 37,100 followers.

In November I gave a talk at the Bay Area AI Meetup entitled “How to Become a Self-Driving Car Engineer.” A fair bit of the talk was an overview of the Udacity Self-Driving Car Engineer Nanodegree Program, but we also touched on a variety of other topics related to autonomous vehicles, particularly during the question-and-answer session.

The slides for the talk:

The Lane-Finding demo:

The talk itself:

An interview I recorded after the talk with Alexy Khrabrov, the founder of Bay Area AI:

Thanks to the Bay Area AI team for having me!

The Alphabet (Google) self-driving car unit is spinning out as a separate subsidiary within Alphabet, called Waymo.

This is not a surprise, because Google has been telegraphing this move for months.

That said, it’s not obvious what the practical implications of the move are.

As an independent company under the Alphabet umbrella, Waymo will likely be less insulated from scrutiny regarding its progress and performance as a business, so its next steps in terms of partnership and sales or licensing model will be very interesting to watch.

It’s not obvious to me why that would be the case, unless Alphabet starts breaking out Waymo’s financial details in its annual reports. Alphabet hasn’t done that with other business units, though, so it seems unlikely they would do that with Waymo.

Uber has expanded its self-driving taxi trial to the home of technology and autonomous vehicles: San Francisco. Starting from 14 December, Uber customers with a credit card attached to a San Francisco billing address are eligible to ride in a fleet of five self-driving cars.

“Our cars departed for Arizona this morning by truck,” said an Uber spokesperson in an email to The Verge. “We’ll be expanding our self-driving pilot there in the next few weeks, and we’re excited to have the support of Governor Ducey.”

The move comes after California’s Department of Motor Vehicles revoked the registration of Uber’s 16 self-driving cars because the company refused to apply for the appropriate permits for testing autonomous cars.

This does not feel like progress.

A startup called Blackmore just raised a few million dollars to miniaturize sensors for autonomous vehicles.

A few interesting points about Blackmore:

Business Insider recently launched a special series called “Autonomous World” that covers self-driving cars. It’s thorough!

Articles (I have not read all of them yet) include:

One of the questions I get every now and again is whether self-driving cars are a solved problem. Is there any work left to be done in this field?

The answer is that there is so much work left to be done! It only seems like a solved problem from the outside 🙂

So I was interested to read Francois Chollet’s answer to “Is Deep Learning Overhyped?” on Quora.

Chollet is the author of Keras, which is a deep learning library we use in the Udacity Self-Driving Car Program. He explains at length why artificial intelligence generally, much like autonomous driving specifically, is not a solved problem.

Overall: deep learning has made us really good at turning large datasets of perceptual inputs (images, sounds, videos) and simple human-annotated targets (e.g. the list of objects present in a picture) into models that can automatically map the inputs to the targets. That’s great, and it has a ton of transformative practical applications. But it’s still the only thing we can do really well. Let’s not mistake this fairly narrow success in supervised learning for having “solved” machine perception, or machine intelligence in general. The things about intelligence that we don’t understand still massively outnumber the things that we do understand, and while we are standing one step closer to general AI than we did ten years ago, it’s only by a small increment.

There’s still a lot of work left to do!

Udacity’s partner, Auro Robotics, has been testing its self-driving shuttle on the campus of Santa Clara University for a year. In November they turned it loose on the public for the first time.

IEEE Spectrum says it’s getting a good reception!

During my rides, it was clear that the students are used to the Aero — so used to it that they don’t even think about getting out of its way. That can lead to a somewhat frustrating ride as the vehicle patiently trails a slow-walking student; it has a horn, but is too polite to beep. Visitors to campus, however, are at first puzzled, then thrilled, to learn that they are being chauffeured in a car that is driving itself. (See video, above.) And if you’re in the area, and have never had a ride in an autonomous vehicle, just stand in front of the parking garage for a while — the shuttle won’t ask to see your ID.

Ars Technica has a pretty cool story about Audi demonstrating Vehicle-to-Infrastructure (V2I) communication in Las Vegas.

Or, really, Infrastructure-to-Vehicle:

Audi’s new Traffic Light Information feature can be found on 2017 A4s, Q7s, and allroad vehicles that have Audi’s Connect Prime package — which puts customers out $10 to $30 monthly, depending on the length of the subscription.

As an Audi driver, you experience this feature as a small icon that tells you how much time is left until the next green light as you come to a stop. If you come to a protected left turn and put your left blinker on, the car will give you a countdown unique to that light as well.
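The excerpt doesn't say how Audi computes the countdown, and real V2I deployments typically work from live signal phase and timing (SPaT) data rather than a fixed schedule. As a minimal sketch, assume a hypothetical fixed-time light whose cycle length and green window are known to the car. Under that assumption the "time until green" icon reduces to modular arithmetic on the cycle. The class and its fields are illustrative, not any Audi or city API.

```python
from dataclasses import dataclass


@dataclass
class FixedTimeSignal:
    """Hypothetical fixed-time traffic light; all times in seconds.

    The light repeats every `cycle` seconds and is green from `green_start`
    (inclusive) for `green_duration` seconds within each cycle.
    """
    cycle: float
    green_start: float
    green_duration: float

    def __post_init__(self) -> None:
        # Keep the green window inside one cycle so the arithmetic stays simple.
        if self.cycle <= 0 or self.green_start < 0 or self.green_duration <= 0:
            raise ValueError("cycle and green_duration must be positive, green_start non-negative")
        if self.green_start + self.green_duration > self.cycle:
            raise ValueError("green window must fit inside one cycle")

    def seconds_until_green(self, t: float) -> float:
        """Seconds from time t until the next green (0.0 if it is green now)."""
        phase = t % self.cycle  # position within the current cycle
        if self.green_start <= phase < self.green_start + self.green_duration:
            return 0.0
        # Python's % is non-negative for a positive modulus, so this also
        # covers the case where the next green falls in the following cycle.
        return float((self.green_start - phase) % self.cycle)


if __name__ == "__main__":
    light = FixedTimeSignal(cycle=90, green_start=60, green_duration=30)
    for t in (10, 59, 75, 100):
        print(t, light.seconds_until_green(t))
```

With a 90-second cycle and green from second 60 to second 90, a car stopping at t = 10 would see a 50-second countdown. Under the same assumptions, a protected left-turn arrow could be modeled as a second signal with its own green window, which would give the per-light countdown the article describes.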

This is pretty basic V2I, but so far V2I has mostly been talk and no action, so it’s awesome to see Audi pushing this live.

It’s also interesting to me that Audi charges a fee for this. That suggests there aren’t really network effects to this effort; otherwise Audi would want as many people on the system as possible, even if it had to subsidize the service.