This afternoon I posted a long response to a question about how we will use C++ vs. Python in the Udacity Self-Driving Car Nanodegree Program, and how automotive engineers use those languages on the job.

You can read my full response, but here’s the part where I focus on how automotive engineers write software on the job:

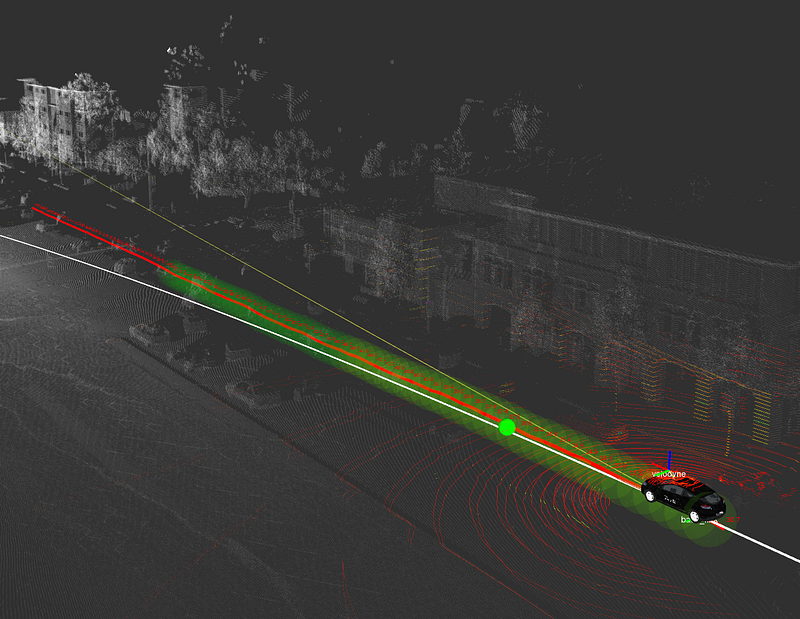

Autonomous vehicle engineers on the job tend to use a variety of languages, depending on their team, their facility with different languages, the APIs their tools expose, and performance requirements.

C++ is a compiled, high-performance language, so most of the code that actually runs on the vehicle is C++.

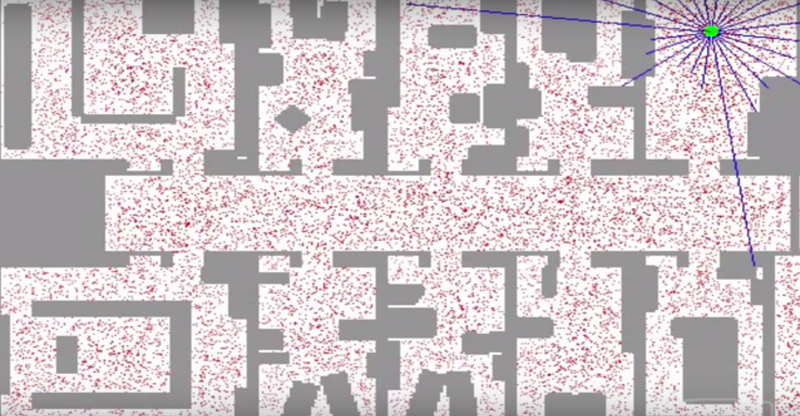

That said, many engineers spend most of their time prototyping algorithms in Python, MATLAB, or even Java or other languages. Other engineers spend pretty much all of their time writing production code in C/C++.

Machine learning engineers often spend a lot of time in Python, because libraries like TensorFlow expose their primary APIs in Python. TensorFlow then does a lot of the heavy lifting of compiling those networks so they run faster.
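To make that concrete, here is a minimal sketch of what working through TensorFlow's Python API can look like. It assumes TensorFlow 2.x and its `tf.function` decorator; the tiny network, the all-ones weights, and the names `W1`, `W2`, and `forward` are hypothetical, chosen only for illustration.

```python
import tensorflow as tf

# Hypothetical weights for a tiny two-layer network (all ones, for illustration only).
W1 = tf.ones([4, 8])
W2 = tf.ones([8, 2])

@tf.function  # asks TensorFlow to trace this Python function into a graph on first call
def forward(x):
    hidden = tf.nn.relu(tf.matmul(x, W1))  # first layer with ReLU activation
    return tf.matmul(hidden, W2)           # second (output) layer

x = tf.ones([1, 4])  # a single dummy input with four features
y = forward(x)
print(y.numpy())
```

The network is described in Python, but once the function has been traced, the math is executed by TensorFlow's compiled runtime and not by the Python interpreter.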

Addendum: Since I have been asked several times recently, my favorite C++ book is Modern C++ Programming with Test-Driven Development, by Jeff Langr. Unfortunately, Udacity does not yet have a C++ course. There appear to be C++ courses on Coursera and edX, but I have not reviewed them yet.