A few weeks ago, I wrote about the mapping deep-dive that Mobileye CEO Amnon Shashua presented at CES 2021.

That deep dive was one of two that Shashua included in his hour-long presentation. Today I’d like to write about the other deep dive: active sensors.

“Active sensors”, in the context of self-driving cars, typically means radar and lidar. These sensors are “active” in the sense that they emit signals (radio waves and light pulses) and then record what bounces back. By contrast, cameras (and also audio sensors, where applicable) are “passive” sensors, in that they merely record signals (light waves and sound waves) that already exist in the world.
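To make "emit, then record what bounces back" concrete, here is a minimal sketch of the time-of-flight arithmetic a pulsed active sensor relies on. It is only an illustration, not anything Shashua presented: the function name and the example delay are hypothetical, and real sensors add a lot of signal processing on top.

```python
# Speed of light in vacuum; both radar and lidar signals travel at
# (approximately) this speed through air.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def range_from_round_trip(round_trip_s: float) -> float:
    """Return the distance to a target given the round-trip time of an echo.

    The signal travels to the target and back, so the one-way distance
    is half of the total path length.
    """
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


if __name__ == "__main__":
    echo_delay_s = 1e-6  # hypothetical: echo received one microsecond after emission
    print(f"target range: {range_from_round_trip(echo_delay_s):.1f} m")
```

A passive sensor has no emission timestamp to subtract from, which is why a single camera has to infer distance by other means.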

Shashua pegs Mobileye’s active sensor work to the goal of producing mass-market self-driving cars by 2025. He hedges a bit and doesn’t quite call this “Level 5 autonomy”, but he’s clear that’s where he’s going.

To penetrate the mass market, Shashua says Mobileye “wants to do two things: be better and be cheaper.” More specifically, Shashua shares that Mobileye is currently developing two standalone sensor subsystems: one built on cameras, and one built on radar plus lidar. Ideally, each of these subsystems could drive the car all by itself.

Shashua reveals that by 2025, Mobileye wants to have three standalone subsystems: camera, radar, and lidar. This is the first time I can recall anybody talking seriously about driving a car with radar alone. If it were possible (that’s a big “if”), it would be a big deal.

Radar

Most of this deep dive is, in fact, about Mobileye’s efforts to dramatically improve radar performance.

“The radar revolution has much further to go and could be a standalone system.”

I don’t fully follow Shashua’s justification for this radar effort. He says, “no matter what people tell you about how to reduce the cost of lidar, radar is 10x less expensive.”

Maybe. With the many companies entering the lidar field, a race to the bottom on prices seems plausible. But let’s grant the premise. Even though lidar might be 10x more expensive than radar, Shashua says that Mobileye still plans to build a standalone, lidar-only sensor subsystem. If lidar is so expensive, and radar is so inexpensive, and Mobileye can get radar to perform as well as lidar, then maybe Mobileye should just ditch lidar.

But they’re not ditching lidar, at least not yet.

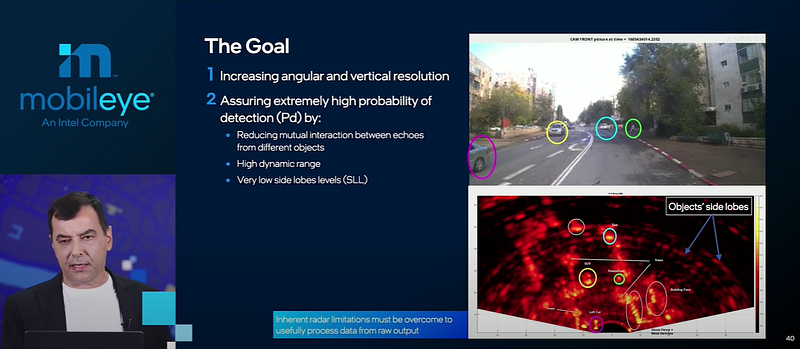

In any case, sensor redundancy is great, and Mobileye is going to make the best radars the world has ever seen. In particular, they are going to focus on two major improvements: increasing resolution, and increasing the probability of detection.

Increasing resolution is a hardware problem. Mobileye is going to improve on the current automotive radar state of the art, which is to pack 12×16 transceivers in a sensor unit. Mobileye is working on 48×48 transceivers. If those figures are transmitter-by-receiver counts, the number of virtual channels grows with their product, from 192 to 2,304, so this would be tremendous.
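A quick back-of-the-envelope check on those transceiver figures, as a sketch. It assumes "12×16" and "48×48" are transmitter × receiver counts and that the radar is a MIMO design, where each transmitter/receiver pair contributes one virtual channel. The function name is hypothetical, and how channel count translates into angular resolution depends on antenna layout, which this does not model.

```python
def virtual_channels(num_tx: int, num_rx: int) -> int:
    """Number of virtual channels in a MIMO radar array.

    Assumption: every transmitter/receiver pair yields one virtual
    channel, so the count is the product of the two.
    """
    if num_tx <= 0 or num_rx <= 0:
        raise ValueError("antenna counts must be positive")
    return num_tx * num_rx


if __name__ == "__main__":
    current = virtual_channels(12, 16)   # figures quoted for today's radars
    planned = virtual_channels(48, 48)   # figures quoted for Mobileye's design
    print(f"current: {current} channels")
    print(f"planned: {planned} channels")
    print(f"ratio:   {planned / current:.0f}x")
```

Under that reading, the planned design has 12 times as many virtual channels as the current state of the art.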

Increasing the probability of detection is a software problem. Shashua calls this “software-defined imaging by radar.” Unlike with the transceivers, the explanation here is vague. Current radar scans produce diffuse “side lobes” around every detected object. Mobileye says its future radar will eliminate that clutter and draw bounding boxes as tight as lidar does.

My best guess as to how they will do this is “mumble, mumble, neural networks.” Mobileye is very good at neural networks.

Lidar

At the end of the deep dive, Shashua spends a few minutes on lidar.

And for those few minutes, the business angles get more interesting than the technology. There’s been a lot of back and forth about Mobileye and Luminar. A few months ago, Luminar announced a big contract from Mobileye, and then shortly after that Mobileye announced the contract would be only short-term. Over the long term, Mobileye is developing their own lidar.

At CES, Shashua says, “2022, we are all set with Luminar.” But for 2025, they need FMCW (frequency-modulated continuous wave) lidar. That’s what they’re going to build themselves.

FMCW is the same technology that radar uses. The Doppler shift in FMCW allows radar to detect velocity instantaneously (as opposed to cameras and conventional lidar, which need to take at least two different observations, and then infer velocity by measuring the time and distance between those observations).
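The difference between the two approaches can be sketched in a few lines. This is only an illustration under stated assumptions: the function names are hypothetical, the 77 GHz carrier is a typical automotive radar frequency rather than a Mobileye figure, and the sign convention (positive means the target is approaching) is my own choice.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def closing_speed_from_doppler(doppler_shift_hz: float, carrier_hz: float) -> float:
    """Closing speed from a single Doppler measurement.

    The echo from a target approaching at speed v is shifted by
    roughly 2 * v / wavelength, so v = shift * wavelength / 2.
    """
    wavelength_m = SPEED_OF_LIGHT_M_PER_S / carrier_hz
    return doppler_shift_hz * wavelength_m / 2.0


def closing_speed_from_two_ranges(range1_m: float, range2_m: float, dt_s: float) -> float:
    """Closing speed inferred from two range observations dt_s apart.

    This is what a sensor without Doppler has to do: measure twice,
    then divide the change in distance by the elapsed time.
    """
    if dt_s <= 0:
        raise ValueError("observations must be separated by a positive time")
    return (range1_m - range2_m) / dt_s


if __name__ == "__main__":
    carrier_hz = 77e9  # assumed carrier frequency
    true_speed = 30.0  # hypothetical target closing at 30 m/s

    # Doppler: one observation is enough.
    shift_hz = 2.0 * true_speed * carrier_hz / SPEED_OF_LIGHT_M_PER_S
    print(f"doppler estimate:   {closing_speed_from_doppler(shift_hz, carrier_hz):.2f} m/s")

    # Two ranges: target moved from 100 m to 97 m over 0.1 s.
    print(f"two-range estimate: {closing_speed_from_two_ranges(100.0, 97.0, 0.1):.2f} m/s")
```

Both estimates agree in this idealized case. In practice the two-observation estimate inherits the noise of both range measurements and lags by the time between them, which is the advantage the Doppler approach avoids.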

FMCW lidar will offer the same velocity benefit as FMCW radar. FMCW also uses lower-energy signals, and possibly performs better in conditions like fog and sandstorms, where lidar currently underperforms.

As Shashua himself says in the presentation, the whole lidar industry is going to FMCW. So why does Mobileye need to build their own lidar?

Well, Shashua says, FMCW is hard.

But then we get to the real answer. Intel, which purchased Mobileye several years ago, is going to use Intel fabs to “put active and passive lidar elements on a chip.”

And that’s when I start to wonder if this Luminar deal really is only short-term.

Intel is struggling in a pretty public way, squeezed on different sides by TSMC, NVIDIA, and AMD. In 2021, the Mobileye CEO (and simultaneously Intel SVP) says they’re going to build their own lidar, basically because they’re owned by Intel.

Maybe Intel will turn out to be better and cheaper at lidar production than the five lidar startups that just went public in the past year. Or maybe Intel won’t be better or cheaper, but Mobileye will have to use Intel lidar anyway, because Intel owns them. Or maybe in a few years Mobileye will quietly extend a deal for Luminar FMCW lidar.