Yesterday, I posted a brief overview of a couple of presentations Mobileye CEO Amnon Shashua gave at CES 2021 this month. I really enjoyed these presentations, in large part because over the years I’ve read less about Mobileye, and know less about them, than about many other companies in the automotive technology ecosystem.

Today, I re-watched Shashua’s “deep dive” on Mobileye’s REM mapping approach. It’s quite informative, so I took notes.

- REM is a Mobileye brand name that stands for Road Experience Management

- The maps are generated from cameras. In the future, Mobileye’s lidar and radar will be designed to work with these camera-only maps, not the other way around.

- In particular, even future lidar and radar systems will not use standard, point-cloud-based HD maps. Point clouds take up too much storage space to be practical, particularly for updating from a huge fleet of vehicles.

- Instead of point clouds, REM uses “semantic” maps that record sparse information, such as driveable paths, stop lines, and traffic signal locations.

- Extracting this semantic information and uploading it to the cloud takes 10 kb of data transfer per kilometer. This costs somebody (the manufacturer?) $1 per year, on average.
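To get a feel for what 10 kb per kilometer means over a year, here is a back-of-the-envelope sketch. The annual mileage is my own assumption, not a number from the talk, and the result is simply expressed in thousands of the talk's "kb" unit (about 150 MB if "kb" means kilobytes).

```python
# Figure from the talk notes: 10 kb uploaded per kilometer driven.
KB_PER_KM = 10

# Hypothetical annual mileage, chosen only for illustration (not from the talk).
ASSUMED_KM_PER_YEAR = 15_000


def annual_upload_thousand_kb(km_per_year: float, kb_per_km: float = KB_PER_KM) -> float:
    """Return the yearly upload volume in thousands of kb."""
    return km_per_year * kb_per_km / 1000


print(annual_upload_thousand_kb(ASSUMED_KM_PER_YEAR))  # 150.0
```

Under that assumed mileage, a vehicle uploads a tiny amount of data per year, which is at least consistent with the low per-year cost quoted above.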

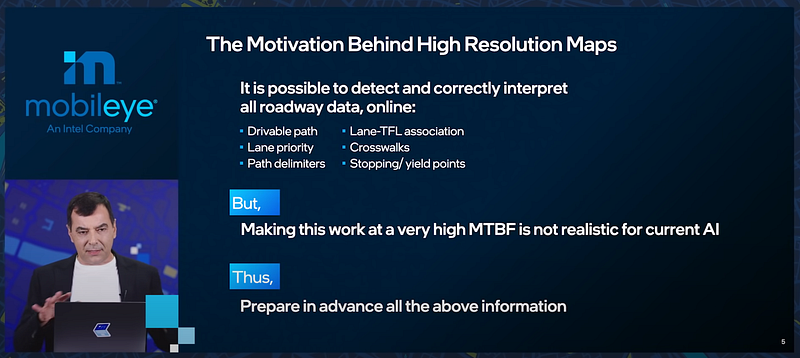

- All of this raises a question, though: are maps even necessary?

- In theory, maps aren’t necessary. After all, humans drive without maps (in many scenarios). Humans just figure out the road as we drive.

- Artificial intelligence can do the same thing, but AI isn’t nearly as good as humans at this (yet). The Mean Time Between Failures (MTBF) for an AI driving without a map will be low, meaning lots of problems.

- Solution: prepare a lot of this information in advance, and store it in the map.

- Shashua says that everyone is using a map, even if they say they’re not. Pretty clear that this is a reference to Tesla.

- Mobileye’s maps have three performance goals: Scale (consumer vs. robotaxi), Up-To-Dateness (real-time), Accuracy (cm-level)

- Mobileye has a division which builds lidar-based HD Maps, so they know the pros and cons of this approach

- Lidar-based HD maps are too detailed. The AI driver only needs information for a 200m radius around the vehicle, but HD maps contain very detailed information about the entire world.

- On the flip side, point clouds are just coordinates in space. AI needs semantic meaning: drivable paths, priority, crosswalks, stopping & yield lines.

- Calculating this in real-time is theoretically possible, but practically impossible: too many conflicting signs and signals, too much noise, too much going on

- Mobileye is now creating AV Maps, which are not HD Maps: Scalability everywhere, Accuracy in a 200m radius, Semantic features generated from the wisdom of the crowd
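The contrast between a raw point cloud and a sparse semantic map, plus the "only the nearest 200m matters" idea, can be sketched in a few lines. This is purely my own illustration: the `Feature` class, the flat local coordinate frame, and the `features_within` helper are hypothetical and say nothing about how Mobileye actually stores or queries its maps.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    """One sparse semantic map element (hypothetical schema)."""
    kind: str  # e.g. "stop_line", "crosswalk", "traffic_signal"
    x: float   # metres east in an assumed local planar frame
    y: float   # metres north in the same frame


def features_within(features, ego_x, ego_y, radius_m=200.0):
    """Return the features no farther than radius_m from the vehicle."""
    return [
        f for f in features
        if math.hypot(f.x - ego_x, f.y - ego_y) <= radius_m
    ]


# Made-up features for illustration only.
demo_map = [
    Feature("stop_line", 50.0, 0.0),
    Feature("crosswalk", 120.0, 90.0),       # 150 m from the origin
    Feature("traffic_signal", 300.0, 10.0),  # beyond 200 m
]

nearby = features_within(demo_map, ego_x=0.0, ego_y=0.0)
print([f.kind for f in nearby])  # ['stop_line', 'crosswalk']
```

Each feature carries a label the planner can act on, which a bare coordinate in a point cloud does not. A real system would use a spatial index rather than a linear scan.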

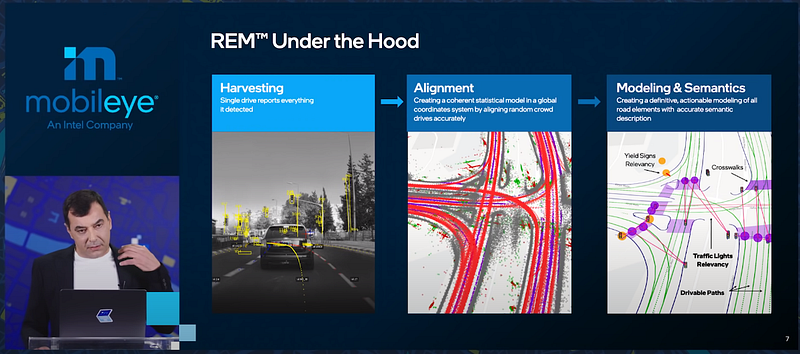

- Map creation process: Harvesting -> Alignment -> Modeling & Semantics

- In the photo above, only the data marked by yellow lines is uploaded to the cloud. That’s the important information.

- Mobileye extracts semantic meaning from the data and uses splines to represent driveable paths.

- Currently, Mobileye maps 8M km of roads every day (6 countries). Unclear if this is 8M unique km, or the same 1km mapped by 8M vehicles every day.

- By 2024, they’ll be mapping 1B km of roads every day (the whole planet).
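To make the "splines for driveable paths" point concrete, here is a minimal sketch of how a handful of control points can stand in for a smooth lane centerline. The talk did not say which spline family Mobileye uses; I picked a uniform Catmull-Rom spline only because it is short to write and passes through its control points. The waypoints are made up.

```python
def catmull_rom(p0, p1, p2, p3, t):
    """Point on the uniform Catmull-Rom segment from p1 to p2, with t in [0, 1]."""
    def axis(a, b, c, d):
        return 0.5 * (
            2 * b
            + (c - a) * t
            + (2 * a - 5 * b + 4 * c - d) * t ** 2
            + (3 * b - a - 3 * c + d) * t ** 3
        )
    return (axis(p0[0], p1[0], p2[0], p3[0]), axis(p0[1], p1[1], p2[1], p3[1]))


def sample_path(points, samples_per_segment=10):
    """Sample a smooth path through all control points."""
    # Repeat the end points so the curve also covers the first and last segment.
    padded = [points[0], *points, points[-1]]
    path = []
    for i in range(1, len(padded) - 2):
        for s in range(samples_per_segment):
            t = s / samples_per_segment
            path.append(catmull_rom(padded[i - 1], padded[i], padded[i + 1], padded[i + 2], t))
    path.append(points[-1])
    return path


# Four made-up waypoints (metres) describing a gentle lane shift.
waypoints = [(0.0, 0.0), (10.0, 0.0), (20.0, 5.0), (30.0, 5.0)]
path = sample_path(waypoints)
print(len(path))  # 31
```

Four control points are enough to regenerate 31 samples (or any other density) on the vehicle, which is the appeal of this kind of representation: a few numbers per stretch of road rather than a dense set of coordinates.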