My Udacity colleague, Cezanne Camacho, is preparing a presentation on capsule networks and gave a draft version in the office today. Cezanne is a terrific engineer and teacher. She has already written a great blog post on capsule networks, and she graciously allowed me to share some of it here.

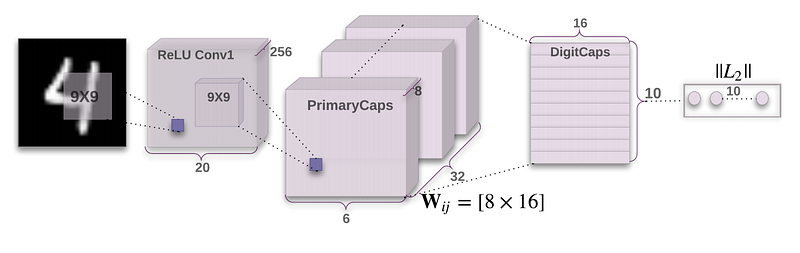

Capsule networks come from a 2017 paper by Sara Sabour, Nicholas Frosst, and Geoffrey Hinton at Google: “Dynamic Routing Between Capsules”. Hinton, in particular, is one of the world’s foremost authorities on neural networks.

As my colleague, Cezanne, writes on her blog:

“Capsule Networks provide a way to detect parts of objects in an image and represent spatial relationships between those parts. This means that capsule networks are able to recognize the same object in a variety of different poses even if they have not seen that pose in training data.”

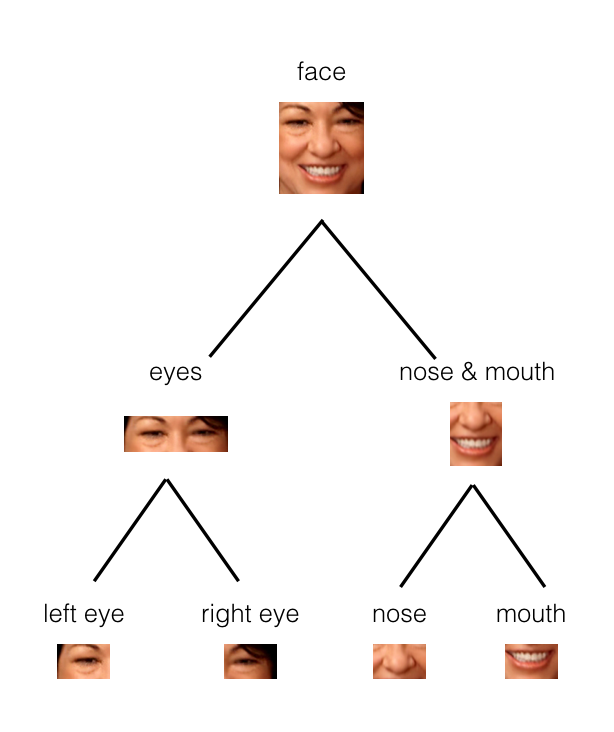

Cezanne explains that a “capsule” encompasses features that make up a piece of an image. Think of an image of a face, for example, and imagine capsules that capture each eye, and the nose, and the mouth.
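To make the idea of a capsule a bit more concrete: in the Sabour, Frosst, and Hinton paper, a capsule outputs a vector rather than a single number. The vector's length stands for how likely it is that the part is present, and its direction encodes properties such as pose. Here is a minimal sketch of the paper's "squash" nonlinearity in plain Python. The `weak_eye` and `strong_eye` values are made up for illustration, and this is not Cezanne's implementation.

```python
import math

def squash(vector):
    """Rescale a capsule's raw output so its length falls in [0, 1).

    The direction is preserved; only the length changes.
    """
    squared_norm = sum(x * x for x in vector)
    if squared_norm == 0.0:
        return [0.0 for _ in vector]
    norm = math.sqrt(squared_norm)
    scale = squared_norm / (1.0 + squared_norm) / norm
    return [scale * x for x in vector]

def length(vector):
    """Euclidean length of a vector."""
    return math.sqrt(sum(x * x for x in vector))

# Hypothetical raw outputs of an "eye" capsule (illustrative values only).
weak_eye = [0.1, -0.05, 0.02, 0.0]    # little evidence of an eye
strong_eye = [3.0, -1.5, 2.0, 0.5]    # strong evidence of an eye

print(length(squash(weak_eye)))    # close to 0: probably no eye here
print(length(squash(strong_eye)))  # close to 1: probably an eye here
```

Short raw vectors get squashed toward zero length and long ones toward a length of one, so the length can be read as a rough confidence that the part exists.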

These capsules organize into a tree structure. Larger structures, like a face, would be parent nodes in the tree, and smaller structures would be child nodes.

“In the example below, you can see how the parts of a face (eyes, nose, mouth, etc.) might be recognized in leaf nodes and then combined to form a more complete face part in parent nodes.”

“Dynamic routing” determines how the capsules in one layer connect to the capsules in the next:

“Dynamic routing is a process for finding the best connections between the output of one capsule and the inputs of the next layer of capsules. It allows capsules to communicate with each other and determine how data moves through them, according to real-time changes in the network inputs and outputs!”

Dynamic routing is implemented as an iterative process, which Cezanne does a really nice job of describing, along with the accompanying math, in her blog post.
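For a rough feel of that iterative process, here is a small sketch of routing-by-agreement in plain Python, following my reading of the algorithm in the paper. It is not Cezanne's PyTorch code. In a real network the prediction vectors come from learned weight matrices. Here the `predictions` values, the two hypothetical parents ("face" and "other"), and the function name `dynamic_routing` are all assumptions made up for illustration.

```python
import math

def squash(vector):
    """Rescale a vector so its length falls in [0, 1), keeping its direction."""
    squared_norm = sum(x * x for x in vector)
    if squared_norm == 0.0:
        return [0.0 for _ in vector]
    scale = squared_norm / (1.0 + squared_norm) / math.sqrt(squared_norm)
    return [scale * x for x in vector]

def softmax(logits):
    """Turn a list of logits into weights that sum to 1."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def dynamic_routing(predictions, iterations=3):
    """predictions[i][j] is child capsule i's predicted output for parent j."""
    num_children = len(predictions)
    num_parents = len(predictions[0])
    dim = len(predictions[0][0])
    # Routing logits start at zero, so each child initially splits evenly.
    logits = [[0.0] * num_parents for _ in range(num_children)]
    for _ in range(iterations):
        # Each child distributes its output across the parents.
        coupling = [softmax(row) for row in logits]
        parents = []
        for j in range(num_parents):
            total = [0.0] * dim
            for i in range(num_children):
                for d in range(dim):
                    total[d] += coupling[i][j] * predictions[i][j][d]
            parents.append(squash(total))
        # Children whose predictions agree with a parent route more to it.
        for i in range(num_children):
            for j in range(num_parents):
                agreement = sum(p * v for p, v in zip(predictions[i][j], parents[j]))
                logits[i][j] += agreement
    return parents, coupling

# Hypothetical predictions from three child capsules (two eyes and a mouth).
# They roughly agree about parent 0 ("face") and disagree about parent 1 ("other").
predictions = [
    [[1.0, 1.0], [1.0, 0.0]],
    [[1.0, 0.9], [-1.0, 0.0]],
    [[0.9, 1.0], [0.0, -1.0]],
]
parents, coupling = dynamic_routing(predictions)
for i, row in enumerate(coupling):
    print(f"child {i} coupling to [face, other]:", [round(c, 3) for c in row])
```

Because the three children make similar predictions for the "face" parent, their agreement grows with each iteration and most of their output ends up routed there, while the "other" parent, which receives conflicting predictions, stays short.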

Capsule networks seem to do well with image classification on a few datasets, such as MNIST, but they haven’t been widely deployed yet because they are slow to train.

In case you’d like to play with capsule networks yourself, Cezanne also published a Jupyter notebook with her PyTorch implementation of the Sabour, Frosst, and Hinton paper!