Exactly one year ago, I laid out a number of predictions for the self-driving car world in 2017, along with associated percentages (I lifted the percentages idea from Scott Alexander). Probably I should’ve written this post yesterday, on the last day of 2017, but better late than never.

Here are my predictions from one year ago, scored. Bold font means the prediction was correct. Plain font means the prediction was incorrect.

- 1 Udacity Self-Driving Car Engineer Nanodegree Program student will start a new, permanent, full-time autonomous vehicle job: 99%

- Level 4 self-driving cars will be available for ridesharing on public roads somewhere in the world: 90%

- Level 4 self-driving cars will be available for ridesharing on public roads somewhere in the United States: 90%

- Ford will not push back its 2021 target launch for Level 4 vehicles: 90%

[UPDATE: Ford CEO Jim Hackett has walked this back a little, but officially Ford is still committed to 2021.]

- 100 Udacity Self-Driving Car Engineer Nanodegree Program students will start new, permanent, full-time autonomous vehicle jobs: 90%

- No US highway will have a speed limit for autonomous vehicles that is faster than the speed limit for human-driven vehicles: 90%

- 1000 Udacity Self-Driving Car Engineer Nanodegree Program students will start new autonomous vehicle jobs (possibly contract roles or internships): 80%

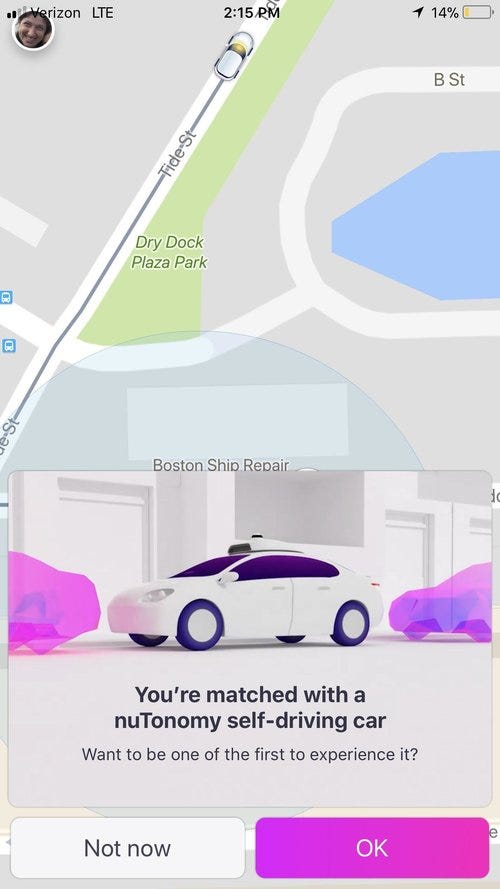

[UPDATE: A year ago I did not appreciate how hard it would be for us to measure this. If you are a student, at some point Udacity will email you a survey about your job search. Please respond! In the meantime, we are looking into more automatic ways to measure students-in-jobs.]

- Level 4 self-driving cars will be available for ridesharing on public roads in Singapore: 80%

[UPDATE: As far as I can tell, nuTonomy is continuing to beta test their service in Singapore, with a target public launch of Q2 2018. Although the prediction was not precise, I am not going to count the current service, because, as far as I know, it’s not open to the general public.]

- A company will be acquired primarily for its autonomous vehicle capabilities with a valuation above $100 MM USD: 80%

- No company will sell a vehicle with autonomous technology that exceeds what Tesla offers: 80%

[UPDATE: Although Business Insider just ranked GM Super Cruise ahead of Tesla Autopilot, it seems close enough and subjective enough that I score them as essentially tied.]

- At least 1 company that has not done so before will complete a continuous US coast-to-coast demonstration trip in a fully autonomous vehicle: 80%

[UPDATE: Torc Robotics.]

- Level 4 self-driving cars will be available for ridesharing on public roads in California: 70%

- Conditional on Level 3 self-driving cars being legal somewhere, Tesla will enable Level 3 autonomy in that location: 70%

[UPDATE: Many (most?) US states have not specifically regulated self-driving cars, leading some lawyers to believe that there are no restrictions on self-driving cars in those jurisdictions. So I count this one as wrong, because Level 3 is presumably legal somewhere, but Tesla has not enabled it.]

- Google will offer rides to the public in its self-driving cars: 70%

[UPDATE: Same logic as Singapore, above.]

- No member of the general public will die in a Level 4 vehicle: 70%

- 2500 Udacity Self-Driving Car Engineer Nanodegree Program students will start new autonomous vehicle jobs (possibly contract roles or internships): 60%

- Level 4 self-driving cars will be available for ridesharing on public roads somewhere in Europe: 60%

- Level 4 self-driving cars will not be available for ridesharing on public roads somewhere in China: 60%

- No company will be acquired primarily for its autonomous vehicle capabilities with a valuation above $500 MM USD: 60%

[UPDATE: Mobileye. Oops.]

- A new autonomous vehicle startup will form and raise money at a valuation above $75 MM USD: 60%

[UPDATE: Argo AI.]

- Ford will commit to launching Level 4 vehicles before 2021: 50%

- Level 4 self-driving cars will be available for ridesharing on public roads somewhere in the world, without a safety driver: 50%

[UPDATE: Same logic as Singapore, above.]

- Level 3 self-driving cars will be available for private ownership somewhere in the United States: 50%

- Somebody will die in a Tesla Autopilot crash: 50%

- Level 4 autonomous vehicles will be available for public ridesharing when snow is on the ground: 50%

99% confidence: 1 / 1 (100% correct)

90% confidence: 5 / 5 (100% correct)

80% confidence: 3 / 5 (60% correct)

70% confidence: 1 / 4 (25% correct)

60% confidence: 2 / 5 (40% correct)

50% confidence: 0 / 5 (0% correct)

Statistically, based on my confidence assessments, about 18 of the predictions should have been correct. In fact, only 12 were correct. So I was overconfident in my ability to predict the future.
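The arithmetic behind that "about 18" figure can be sketched in a few lines of Python. This is only an illustration: the tallies are copied from the scorecard above, the expected count is taken to be the sum of the stated probabilities, and the names `BUCKETS`, `expected_correct`, and `actual_correct` are made up for this example.

```python
# Tallies copied from the scorecard above.
# Key: stated confidence. Value: (predictions correct, predictions made).
BUCKETS = {
    0.99: (1, 1),
    0.90: (5, 5),
    0.80: (3, 5),
    0.70: (1, 4),
    0.60: (2, 5),
    0.50: (0, 5),
}


def expected_correct(buckets):
    """Expected number of hits if every stated confidence were accurate.

    Assumption: each prediction contributes its stated probability,
    so the expectation is the sum of confidence * count per bucket.
    """
    return sum(conf * total for conf, (_, total) in buckets.items())


def actual_correct(buckets):
    """Number of predictions that were scored as correct."""
    return sum(correct for correct, _ in buckets.values())


if __name__ == "__main__":
    total = sum(t for _, t in BUCKETS.values())
    print(f"predictions made: {total}")
    print(f"expected correct: {expected_correct(BUCKETS):.2f}")
    print(f"actually correct: {actual_correct(BUCKETS)}")
    # Per-bucket hit rate next to the stated confidence.
    for conf, (correct, n) in BUCKETS.items():
        print(f"{conf:.0%} stated -> {correct / n:.0%} observed ({correct}/{n})")
```

A perfectly calibrated forecaster would see each observed rate land near its stated confidence; here the gap widens as the stated confidence drops.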

Mostly, I appear to have been too optimistic, although in some cases (e.g. fatalities) I was too pessimistic. A few of my predictions were also not precise enough.

Some important stories from 2017 do not show up in my predictions:

- The Waymo v. Uber lawsuit.

- The success of Cruise Automation in building a Level 4 fleet.

- Tesla’s manufacturing difficulties.

- The 10,000+ students who have enrolled in the Nanodegree Program, many of whom will get jobs in the industry in 2018.

- Voyage spinning out from Udacity.

- The growing importance of lidar.

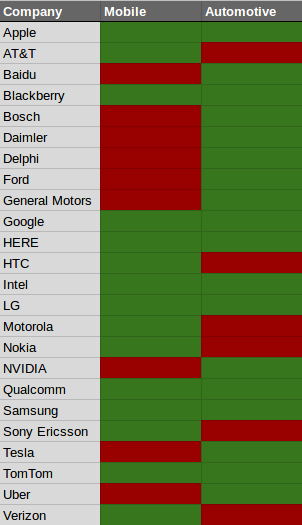

- Investments by Didi, Baidu, and Chinese companies generally.

- NVIDIA’s dominance of the self-driving car chip market.

2018 predictions coming tomorrow.