The story of the day is Kyle Vogt’s announcement that Cruise and GM are ready to begin mass producing self-driving cars.

The post does a nice job of bringing together both Silicon Valley and Detroit.

On the latest episode of the Recode Decode podcast, Kara Swisher interviews Chris Urmson.

Urmson’s Carnegie Mellon team won the DARPA Urban Challenge in 2007. From there he joined my current boss, Sebastian Thrun, at the Google Self-Driving Car Project, back when Sebastian was running it. Urmson led the Google Self-Driving Car Project himself after Sebastian departed to launch Udacity. Most recently, Urmson left Google to found Aurora Innovation, his own self-driving car startup.

The interview is an hour long and Swisher lives up to her reputation as the best of tech journalists. They cover the early days of the DARPA challenges, how Urmson got to Google, why he left Google, what Aurora is doing, how the automotive industry and tech industry will partner and compete, when we will see fully autonomous vehicles on the road, and the moral responsibility autonomous vehicle engineers have to the drivers they might put out of work.

Listen to the whole thing.

https://art19.com/shows/recode-decode/episodes/e6ae4866-7f89-4674-810b-63fa991b7dce

Lyft and Drive.ai will be offering free rides in self-driving cars to riders in the Bay Area who opt in. Soon, supposedly.

Lyft continues to build an impressive list of partnerships: GM, Jaguar Land Rover, nuTonomy.

The Drive.ai partnership sounds especially promising, since it’s written in a tone suggesting this effort is going to start soon, maybe before the end of the year.

There are self-driving cars being tested on public streets in cities around the world: Pittsburgh, Phoenix, San Francisco, Singapore, Austin. Some of those cities even have self-driving cars testing with a limited set of pre-screened passengers.

But right now, as far as I know, there is only one city where any civilian off the street can show up and hail a self-driving vehicle. That would be Pittsburgh, where Uber has opened their fleet to public use (with safety drivers).

Drive.ai testing in the Bay Area would bring that number to two.

The Safely Ensuring Lives Future Deployment and Research In Vehicle Evolution (SELF DRIVE) Act just passed the House of Representatives unanimously. Congress loves a good backronym.

Wired has a nice rundown of the key parts of the bill. The major feature seems to be moving autonomous vehicle regulation from a patchwork of 50 different state regimes over to a single set of federal regulations. You’ll no longer have to worry about getting arrested when you take your self-driving car across state lines.

The law was pushed by a consortium of interested companies — Ford, Waymo, Lyft, Uber, and Volvo. To be honest, on self-driving cars, I trust my old employers at Ford more than I trust any department of motor vehicles.

The “unanimous” passage of the bill makes me a little nervous — as if not enough people were paying attention. Surely there is something in there somebody objects to. Personally, I kind of like the “laboratories of democracy” aspect of state regulation.

But since it seems destined to become law anyway, we should all hope this bill speeds the development of safe autonomous vehicles that prevent many of the 35,000 annual motor vehicle fatalities in the US.

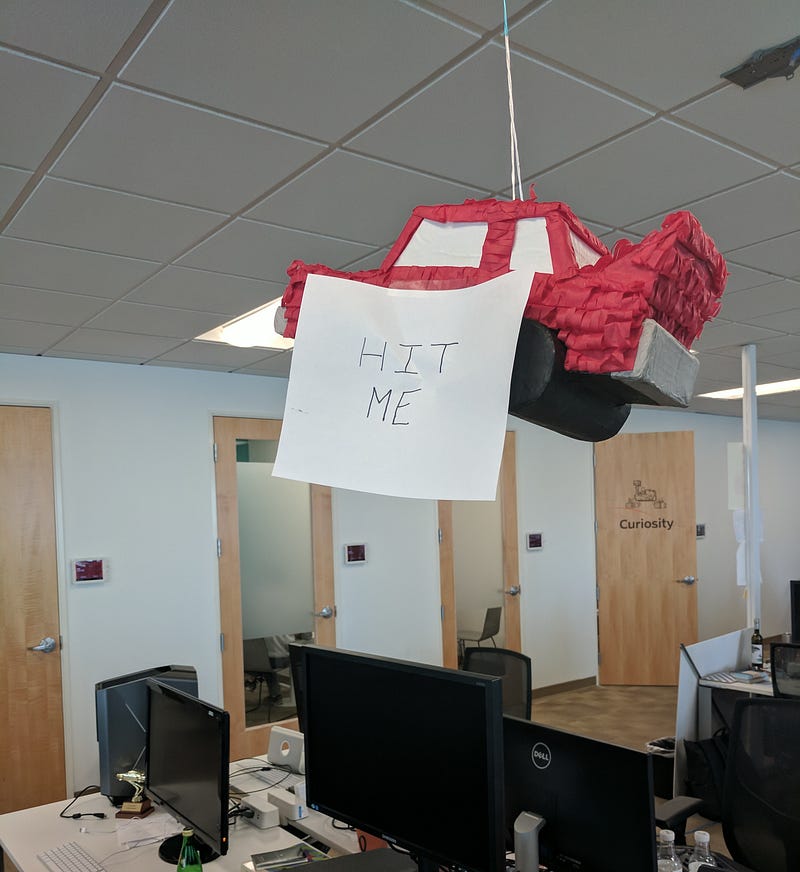

At Udacity, where I work, we have a self-driving car. Her name is Carla.

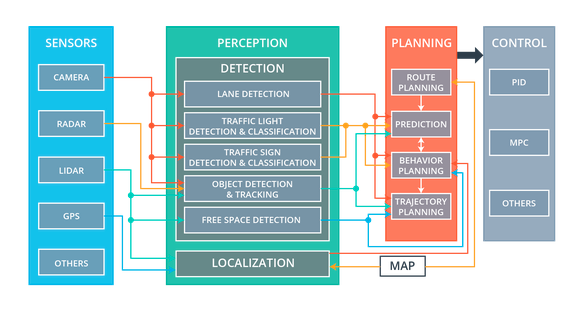

Carla’s technology is divided into four subsystems: sensors, perception, planning, and control.

Carla’s sensor subsystem encompasses the physical hardware that gathers data about the environment.

For example, Carla has cameras mounted behind the top of the windshield. There are usually between one and three cameras lined up in a row, although we can add or remove cameras as our needs change.

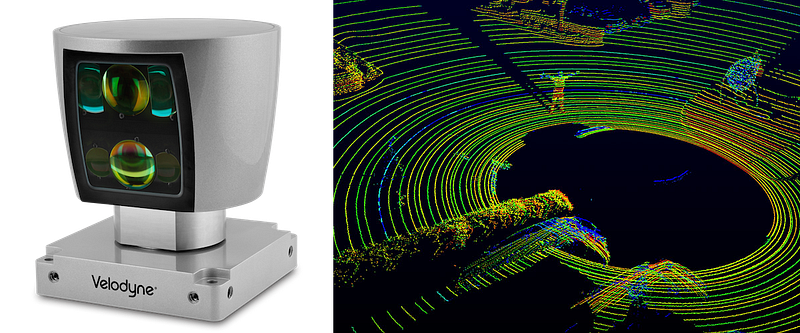

Carla also has a single front-facing radar, embedded in the bumper, and one 360-degree lidar, mounted on the roof.

Sometimes Carla will utilize other sensors, too, like GPS, IMU, and ultrasonic sensors.

Data from these sensors flows into various components of the perception subsystem.

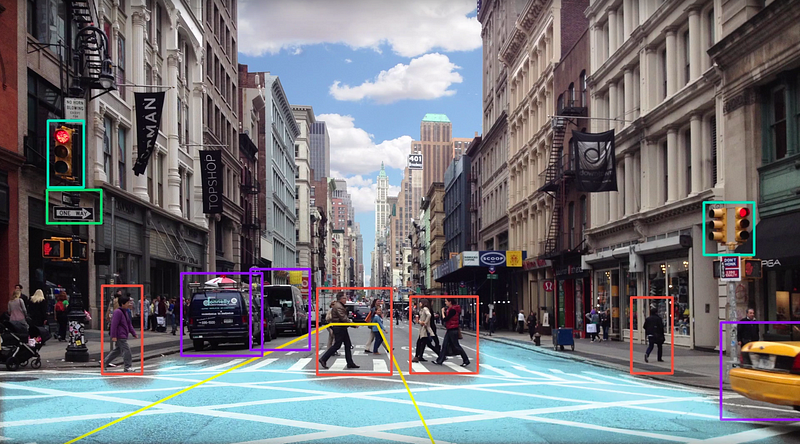

Carla’s perception subsystem translates raw sensor data into meaningful intelligence about her environment. The components of the perception subsystem can be grouped into two blocks: detection and localization.

The detection block uses sensor information to detect objects outside the vehicle. These detection components include traffic light detection and classification, object detection and tracking, and free space detection.

The localization block determines where the vehicle is in the world. This is harder than it sounds. GPS can help, but GPS is only accurate to within 1–2 meters, and for a car that error range is unacceptably large. A car that thinks it’s in the center of a lane could really be 1–2 meters off, up on the sidewalk and running into things. We need to do a lot better than GPS alone.

Fortunately, we can localize Carla to within 10 centimeters or less, using a combination of high-definition maps, Carla’s own lidar sensor, and sophisticated mathematical algorithms. Carla’s lidar scans the environment, compares what it sees to a high-definition map, and then determines a precise location.
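To make the “compare what the lidar sees to the map” idea concrete, here is a deliberately tiny toy, not Carla’s actual algorithm. It assumes the lidar has already picked out a few landmarks, that each one is already matched to its entry in the map, and that the car’s heading is correct, so only a translation needs estimating. Under those assumptions the least-squares correction is just the average disagreement between the map and the scan. Real systems work on full point clouds and also solve for rotation and unknown matches (scan matching or particle filters, for example). `Point`, `estimate_offset`, and `refine_position` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Point:
    x: float  # meters, map frame
    y: float  # meters, map frame


def estimate_offset(map_points: List[Point], observed: List[Point]) -> Tuple[float, float]:
    """Least-squares translation that moves the observed points onto the map points.

    Toy assumptions: observed[i] is already matched to map_points[i], and the
    heading is correct, so only a translation needs to be estimated.
    """
    if not map_points or len(map_points) != len(observed):
        raise ValueError("need equal-length, non-empty lists of matched points")
    n = len(map_points)
    dx = sum(m.x - o.x for m, o in zip(map_points, observed)) / n
    dy = sum(m.y - o.y for m, o in zip(map_points, observed)) / n
    return dx, dy


def refine_position(gps_guess: Point, map_points: List[Point],
                    offsets_from_car: List[Point]) -> Point:
    """Correct a rough GPS position using landmarks measured relative to the car."""
    # Place each lidar measurement in the map frame using the (imprecise) GPS guess.
    observed = [Point(gps_guess.x + o.x, gps_guess.y + o.y) for o in offsets_from_car]
    dx, dy = estimate_offset(map_points, observed)
    # The shift that aligns the scan with the map is the error in the GPS guess.
    return Point(gps_guess.x + dx, gps_guess.y + dy)
```

For example, if the car is really at (10, 5) but GPS reports (11.5, 4.0), three matched landmarks are enough to pull the estimate back to (10, 5).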

The components of the perception subsystem route their output to the planning subsystem.

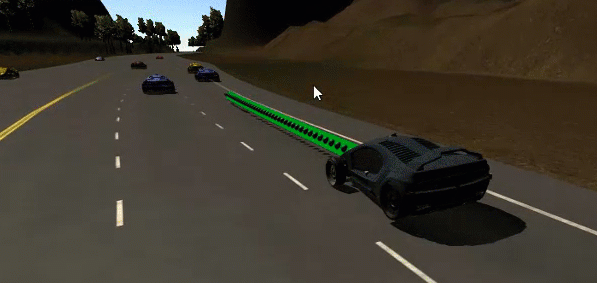

Carla has a straightforward planning subsystem. The planner builds a series of waypoints for Carla to follow. These waypoints are just spots on the road that Carla needs to drive over.

Each waypoint has a specific location and associated target velocity that Carla should match when she passes through that waypoint.
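A waypoint, as described here, is just a position plus a target speed. A minimal sketch of that data structure might look like the following; the field names, units, and the helpers `straight_path` and `closest_waypoint_index` are hypothetical illustrations, not Carla’s actual code.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Waypoint:
    x: float             # meters, map frame
    y: float             # meters, map frame
    target_speed: float  # meters per second when passing through this point


def straight_path(start_x: float, y: float, spacing: float,
                  count: int, speed: float) -> List[Waypoint]:
    """Build evenly spaced waypoints along a straight road, all at one speed."""
    return [Waypoint(start_x + i * spacing, y, speed) for i in range(count)]


def closest_waypoint_index(waypoints: List[Waypoint], x: float, y: float) -> int:
    """Index of the waypoint nearest to the car's current position."""
    return min(range(len(waypoints)),
               key=lambda i: math.hypot(waypoints[i].x - x, waypoints[i].y - y))
```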

Carla’s planner uses the perception data to predict the movements of other vehicles on the road and update the waypoints accordingly.

For example, if the planning subsystem were to predict that the vehicle in front of Carla would be slowing down, then Carla’s own planner would likely decide to decelerate.

The final step in the planning process would be for the trajectory generation component to build new waypoints that have slower target velocities, since in this example Carla would be slowing down as she passes through the upcoming waypoints.
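Here is one way the slow-down example could be sketched, under loudly stated assumptions. The prediction step uses a simple constant-acceleration model for the lead vehicle, and the trajectory step caps each waypoint’s target speed so Carla brakes no harder than a chosen comfortable deceleration (using v² = v₀² − 2as) while never planning to go slower than the predicted lead speed. The names, the 2 m/s² default, and the choice of models are all hypothetical, not how Carla’s planner actually does it.

```python
import math
from dataclasses import dataclass, replace
from typing import List


@dataclass(frozen=True)
class Waypoint:
    s: float             # distance along the route from Carla's position, meters
    target_speed: float  # meters per second


def predict_speed(current: float, accel: float, horizon: float) -> float:
    """Constant-acceleration prediction of another vehicle's speed, floored at zero."""
    return max(current + accel * horizon, 0.0)


def slow_down_for_lead(waypoints: List[Waypoint], current_speed: float,
                       predicted_lead_speed: float,
                       max_decel: float = 2.0) -> List[Waypoint]:
    """Lower target speeds so Carla eases from current_speed toward the lead's speed."""
    updated = []
    for wp in waypoints:
        # Speed still reachable at distance s when braking at max_decel: v^2 = v0^2 - 2as.
        ramp = math.sqrt(max(current_speed ** 2 - 2.0 * max_decel * wp.s, 0.0))
        # Don't plan below the lead's speed, and never raise the original target.
        new_speed = min(wp.target_speed, max(predicted_lead_speed, ramp))
        updated.append(replace(wp, target_speed=new_speed))
    return updated
```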

Similar calculations affect how the planning subsystem treats traffic lights and traffic signs.
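For a red light, a comparable calculation works backward from the stop line: to come to rest at constant deceleration a over distance d, the speed at that distance can be at most √(2ad). The sketch below assigns each waypoint a target speed from its distance to the stop line. The function name, the 1.5 m/s² default, and the constant-deceleration profile are assumptions for illustration only.

```python
import math
from typing import List


def stop_line_speeds(distances_to_stop_line: List[float], cruise_speed: float,
                     decel: float = 1.5) -> List[float]:
    """Target speed (m/s) for each waypoint, given its distance (m) to a red light's stop line."""
    speeds = []
    for d in distances_to_stop_line:
        if d <= 0.0:
            speeds.append(0.0)  # at or past the stop line: remain stopped
        else:
            # Fastest speed from which the car can still stop within d at this deceleration.
            speeds.append(min(cruise_speed, math.sqrt(2.0 * decel * d)))
    return speeds
```

With a 10 m/s cruise speed, waypoints 40, 12, 3, and 0 meters before the line get targets of 10, 6, 3, and 0 m/s.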

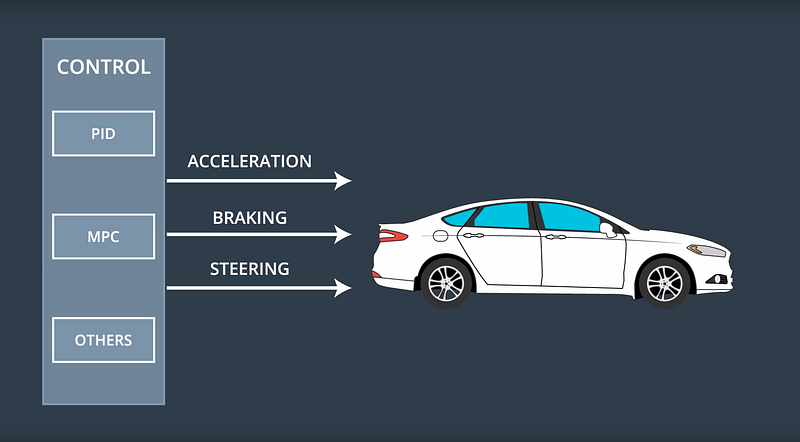

Once the planner has generated a trajectory of new waypoints, this trajectory is passed to the final subsystem, the control subsystem.

The control subsystem actuates the vehicle by sending acceleration, brake, and steering messages. Some of these messages are purely electronic, and others have a physical manifestation. For example, if you ride in Carla, you will actually see the steering wheel turn itself.

The control subsystem takes as input the list of waypoints and target velocities generated by the planning subsystem. Then the control subsystem passes these waypoints and velocities to an algorithm, which calculates just how much to steer, accelerate, or brake, in order to hit the target trajectory.

There are many different algorithms that the control subsystem can use to map waypoints to steering and throttle commands. These different algorithms are called, appropriately enough, controllers.

Carla uses a fairly simple proportional-integral-derivative (PID) controller, but more sophisticated controllers are possible.
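To show what a PID controller does, here is a textbook version applied to speed control: the error is target speed minus current speed, and the clamped output is read as throttle (positive) or brake (negative). The gains, the toy car model (acceleration proportional to the command), and the names `PID` and `simulate_speed` are assumptions for illustration, not Carla’s tuned controller. Production controllers typically also add anti-windup and filter the derivative term.

```python
from typing import Optional


class PID:
    """Textbook proportional-integral-derivative controller with output clamping."""

    def __init__(self, kp: float, ki: float, kd: float,
                 out_min: float = -1.0, out_max: float = 1.0) -> None:
        self.kp, self.ki, self.kd = kp, ki, kd
        self.out_min, self.out_max = out_min, out_max
        self.integral = 0.0
        self.prev_error: Optional[float] = None  # no derivative term on the first step

    def reset(self) -> None:
        self.integral = 0.0
        self.prev_error = None

    def step(self, error: float, dt: float) -> float:
        if dt <= 0.0:
            raise ValueError("dt must be positive")
        self.integral += error * dt
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        out = self.kp * error + self.ki * self.integral + self.kd * derivative
        return max(self.out_min, min(self.out_max, out))


def simulate_speed(target: float, steps: int = 600, dt: float = 0.1,
                   max_accel: float = 3.0) -> float:
    """Drive a toy car (acceleration = max_accel * command) toward a target speed."""
    pid = PID(kp=0.5, ki=0.05, kd=0.0)
    speed = 0.0
    for _ in range(steps):
        command = pid.step(target - speed, dt)  # +1 = full throttle, -1 = full brake
        speed += max_accel * command * dt
    return speed
```

After 60 simulated seconds, `simulate_speed(10.0)` settles close to 10 m/s; the integral term is what removes the remaining steady-state error.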

That’s how Carla works!

First, the sensor subsystem collects data from Carla’s cameras, radar, and lidar. The perception subsystem uses that sensor data to detect objects in the world and localize the vehicle within its environment. Next, the planning subsystem uses that environmental data to build a trajectory for Carla to follow. Finally, the control subsystem turns the steering wheel and actuates the accelerator and brake in order to move the car along that trajectory.

We’re very proud of Carla. She’s driven from Mountain View to San Francisco, and done lots of driving on our test track.

The most exciting thing about Carla is that every Udacity student gets to load their code onto her computer at the end of the Nanodegree Program and see how well she drives on our test track.

If you’re interested in learning about how to program self-driving cars, and you want to try out driving Carla yourself, you should sign up for the Udacity Self-Driving Car Engineer Nanodegree Program!

There are now 39 companies on the list of authorized testers of autonomous vehicles in California.

The list, which I believe is in chronological order, starts with big automotive companies that everybody recognizes, and then includes many smaller, anonymous startups toward the end. Udacity is pretty much in the middle 🙂

An interesting exercise would be to dive into this list and figure out where each company is with their self-driving car development efforts.

Today is my 36th birthday, which officially pushes me into middle age on those surveys that ask if you are 25 or younger, 26–35, 36–45, and so on. That’s a little rough, but otherwise this has been a great year, professionally and personally.

Twelve months ago, on my 35th birthday, I was leading a small team that was hustling to put together enough of a self-driving car curriculum to release before the holidays.

Today, twelve months later, our small team has grown and turned over, and we’ve released a nine-month program that has helped thousands of students prepare for roles working on autonomous vehicles. Our first students will graduate in the coming months, and we’ve already seen many students land jobs in the field, even before graduation.

I hope in another twelve months I can circle back and say that this coming year has been even better.

The autonomous vehicle field is full of amazing personalities: people who possess remarkable technical skills and rarefied knowledge, but who are also supremely creative and incredibly passionate.

The team from Mercedes-Benz is a perfect example.

They’re one of our core partners for our Self-Driving Car Engineer Nanodegree program, and they’ve done a remarkable job of not just teaching our students technical skills, but giving them a real sense of purpose and vision. Perhaps most importantly, they have helped to make complex autonomous vehicle concepts accessible to every single student we teach.

I feel fortunate to work with such great people, and I’d like to introduce you to some of them right now: Axel, Michael, Dominik, Andrei, Maximillian, Tiffany, Tobi, Mahni, Beni, and Emmanuel!

First, meet Axel. In this video, he shares the history of Mercedes-Benz and autonomous vehicle research, and also describes the type of engineers they are hiring today:

In this next video, Dominik, Michael, and Andrei outline the tools the Mercedes-Benz sensor fusion team uses to combine sensor data for tracking objects in the environment:

Next, Maximillian and Tiffany talk about the work they do on the localization team to help the vehicle determine where it is in the world:

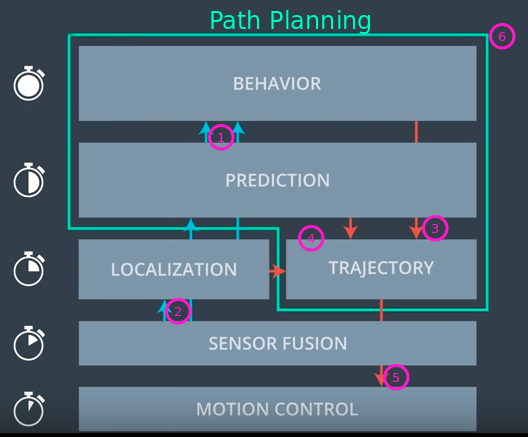

Finally, in this video, Tobi, Mahni, Beni, and Emmanuel outline the three phases of path planning. First, the prediction team estimates what the environment will look like in the future. Then, the behavior planning team decides which maneuver to take next, based on those predictions. Lastly, the trajectory generation team builds a safe and comfortable trajectory to execute that maneuver:

Amazing people, right? Are you ready to join them? Then you should apply at Mercedes-Benz, because they’re hiring!

Not quite ready yet? Then apply now for our Self-Driving Car Engineer Nanodegree program! You’ll be joining the next generation of autonomous vehicle experts, and that’s a pretty amazing thing.

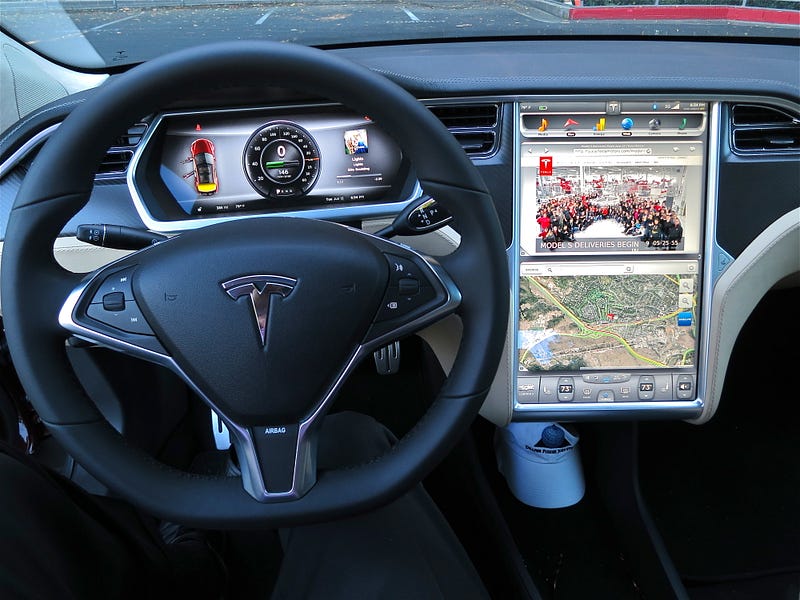

The Wall Street Journal, a publication I read daily and generally quite like, has a recent feature on the drama behind Tesla Autopilot that seems to me a bit unfair.

Indeed, the piece actually quotes Elon Musk saying the same thing:

“In an email, Mr. Musk said he was unhappy with previous Journal articles on the company. ‘While it is possible that this article could be an exception, that is extremely unlikely, which is why I declined to comment,’ he wrote.”

The article dives deep into the internal strife at Tesla over how far and how fast to push Autopilot, Tesla’s suite of advanced driver assistance technologies.

The tone of the piece is that Musk pushed his engineers to release Autopilot beyond its safe capabilities, and as a result many of them objected and ultimately quit.

“Behind the scenes, the Autopilot team has clashed over deadlines and design and marketing decisions, according to more than a dozen people who worked on the project and documents reviewed by The Wall Street Journal. In recent months, the team has lost at least 10 engineers and four top managers — including Mr. Anderson’s [DS: Sterling Anderson was the Director of Autopilot] successor, who lasted less than six months before leaving in June.”

Despite all of the buildup, however, The Journal ultimately fails to make the case that Autopilot was released too aggressively or that it is unsafe.

The piece quotes both named and unnamed sources who said, as early as 2015, that Autopilot wasn’t ready for hands-free mode and that Musk pushed a product onto the public that wasn’t safe or ready.

And, to be sure, I favor a management style in which the people doing the work get to make the decisions, instead of Musk’s style, which seems to be to dictate decisions for employees to execute.

But Elon Musk has done pretty well for himself and for Tesla, and The Journal isn’t able to dig up any scandals since 2015, except for the one well-known Autopilot crash in Florida.

Tesla Autopilot may be inherently unsafe, and maybe Musk’s push to release it was reckless. Just because nothing’s gone terribly wrong yet doesn’t mean Musk made the right decision. Maybe Tesla’s just been lucky.

But if a newspaper is going to write a hit piece on a technology product, implying that it’s unsafe, it needs to bring more evidence to the table than uncomfortable quotes from engineers who quit.

Term 3 of the Udacity Self-Driving Car Engineer Nanodegree Program starts with path planning. This is one of the deepest and hardest problems for a self-driving car.

Here are three Udacity student approaches that show the complexity and beauty of path planning.

Mithi published a three-part series about what she calls “the most difficult project yet” of the Nanodegree Program. In Part 1, she outlines the goals and constraints of the project, and decides how to approach the solution. Part 2 covers the architecture of the solution, including the classes Mithi developed and the math for trajectory generation. Part 3 covers implementation, behavior planning, cost functions, and some extra considerations that could be added to improve the planner. This is a great series to review if you’re just starting the project.

“I decided that I should start with a simple model with many simple assumptions and work from there. If the assumption does not work then I will then make my model more complex. I should keep it simple (stupid!).

A programmer should not add functionality until deemed necessary. Always implement things when you actually need them, never when you just foresee that you need them. A famous programmer said that somewhere.

My design principle is, make everything simple if you can get away with it.”

Mohan takes a different approach to path planning, combining a cost function with a feasibility checklist. He scores and ranks each lane with the cost function, and then uses the feasibility checklist to decide whether it is safe to move into a better-ranked lane.

“This comes down to two things (and I’m going to be specific to highway scenario).

Estimating a score for each lane, to determine the best lane for us to be in (efficiency)

Evaluating the feasibility of moving to that lane in the immediate future (safety & comfort)”
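Here is a minimal sketch of the general “score the lanes, then check feasibility” pattern Mohan describes. It is not his code: the single cost term (how much slower than the speed limit the nearest car ahead is), the gap thresholds, and the names `Car`, `lane_cost`, `is_feasible`, and `choose_lane` are hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Car:
    lane: int     # lane index, 0 = leftmost
    s: float      # position along the road, meters
    speed: float  # meters per second


def lane_cost(lane: int, ego: Car, others: List[Car], speed_limit: float,
              horizon: float = 60.0) -> float:
    """Efficiency score (lower is better): how slow the nearest car ahead in this lane is."""
    ahead = [c for c in others if c.lane == lane and 0.0 < c.s - ego.s < horizon]
    if not ahead:
        return 0.0  # open lane: no penalty
    lead = min(ahead, key=lambda c: c.s)
    return max(0.0, speed_limit - lead.speed) / speed_limit


def is_feasible(lane: int, ego: Car, others: List[Car],
                gap_ahead: float = 20.0, gap_behind: float = 10.0) -> bool:
    """Safety check: only adjacent lanes, and no car inside the gap we would merge into."""
    if abs(lane - ego.lane) > 1:
        return False
    return all(not (c.lane == lane and -gap_behind < c.s - ego.s < gap_ahead)
               for c in others)


def choose_lane(ego: Car, others: List[Car], num_lanes: int, speed_limit: float) -> int:
    # Rank lanes by cost, preferring smaller lane changes when costs tie.
    ranked = sorted(range(num_lanes),
                    key=lambda l: (lane_cost(l, ego, others, speed_limit), abs(l - ego.lane)))
    for lane in ranked:
        if lane == ego.lane or is_feasible(lane, ego, others):
            return lane
    return ego.lane
```

Separating the two steps keeps efficiency and safety concerns apart: the cost function can say a lane is attractive, but the checklist still gets a veto.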

The 11th post in Andrew’s series on the Nanodegree Program covers Term 3 broadly and path planning specifically. In particular, Andrew lays out where this path planning project falls in the taxonomy of autonomous driving, and the high-level inputs and outputs of a path planner. This is a great post to review if you’re interested in what a path planner does.

“I found the path planning project challenging, in large part due to fact that we are implementing SAE Level 4 functionality in C++ and the complexity that comes with the interactions required between the various modules.”

These examples make clear the vision, skill, and tenacity our students are applying to even the most difficult challenges, and it’s a real pleasure to share their incredible work. It won’t be long before these talented individuals graduate the program, and begin making significant, real-world contributions to the future of self-driving cars. I know I speak for everyone at Udacity when I say that I’m very excited for the future they’re going to help build!