University of Michigan researchers just released an exciting but vague (that’s the second time I’ve used that formulation recently) whitepaper on testing and validation for autonomous vehicles.

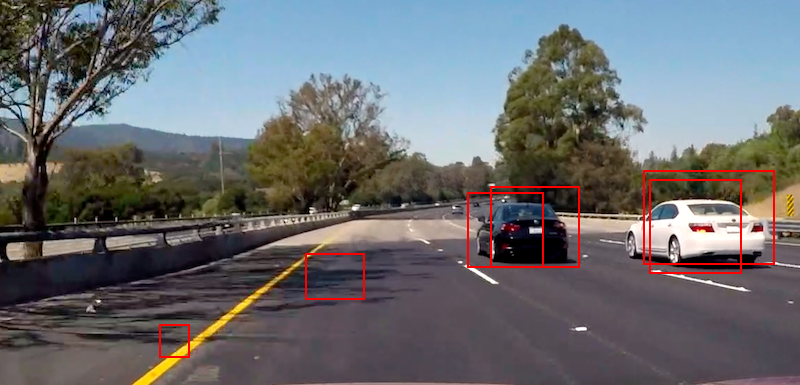

Testing is one of many open challenges in autonomous vehicle development. There’s no clear consensus on how much testing needs to be done, how to do it, or how safe is safe enough.

Last year, RAND issued a report estimating that it would be basically impossible to empirically verify the safety of self-driving cars on any reasonable timeframe.

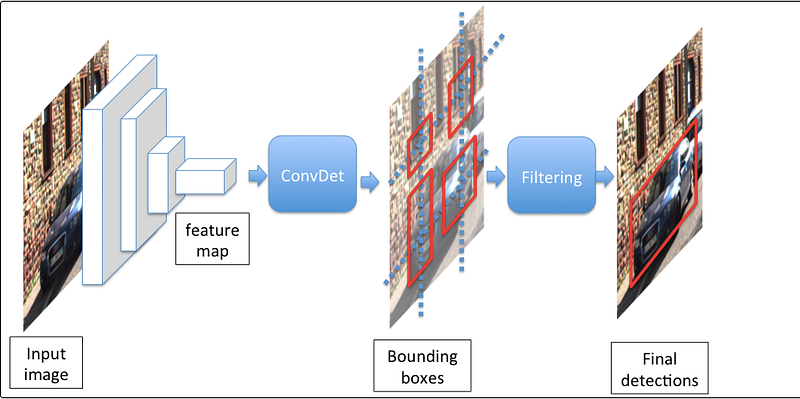

Ding Zhao and Huei Peng, from Michigan, claim to have found a way to cut the billion-plus miles of testing otherwise required by 99.9%, which would leave something on the order of a million miles. The four keys are:

- Naturalistic Field Operational Tests

- Test Matrix

- Worst Case Scenario

- Monte Carlo Simulation

The paper is light on details, but the approach seems to boil down to: drive dangerous situations again and again on a test track, instead of waiting for the dangerous situations to occur on the road, because that could take forever.
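
To make that intuition concrete, here is a minimal sketch of my own reading of the idea. It is not the paper's actual method, and every number in it is hypothetical. It assumes we already know how often a dangerous situation comes up per mile (say, from naturalistic driving data) and compares two ways of spending the same number of trials: driving ordinary miles and waiting for crashes, versus staging only the dangerous situation and re-weighting by how rare it is.

```python
import random

# All numbers below are hypothetical, chosen only for illustration.
P_DANGEROUS_PER_MILE = 1e-4      # chance a dangerous situation arises in a given mile
P_CRASH_GIVEN_DANGEROUS = 0.01   # chance the car fails once it is in that situation


def naive_estimate(miles, rng):
    """Drive ordinary miles and count crashes as they happen to occur."""
    crashes = 0
    for _ in range(miles):
        dangerous = rng.random() < P_DANGEROUS_PER_MILE
        if dangerous and rng.random() < P_CRASH_GIVEN_DANGEROUS:
            crashes += 1
    return crashes / miles


def focused_estimate(trials, rng):
    """Stage only dangerous situations, then re-weight by how rare they are."""
    crashes = 0
    for _ in range(trials):
        if rng.random() < P_CRASH_GIVEN_DANGEROUS:
            crashes += 1
    # Crash rate per dangerous situation, scaled back to a per-mile rate.
    return (crashes / trials) * P_DANGEROUS_PER_MILE


rng = random.Random(0)  # fixed seed so runs are repeatable
trials = 100_000
true_rate = P_DANGEROUS_PER_MILE * P_CRASH_GIVEN_DANGEROUS
naive = naive_estimate(trials, rng)
focused = focused_estimate(trials, rng)

print(f"true crash rate per mile: {true_rate:.2e}")
print(f"naive estimate:           {naive:.2e}")
print(f"focused estimate:         {focused:.2e}")
```

With these made-up rates, 100,000 ordinary miles contain about 0.1 crashes on average, so the naive estimate is usually just zero. The same 100,000 trials spent on staged dangerous situations contain about 1,000 crashes, which is enough to pin the rate down reasonably well. The catch is that the re-weighting step is only as good as the assumed frequency of dangerous situations.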

And that all seems smart enough. It’s like practicing three-point shots, instead of just mid-range jumpers. Or building exciting new software projects as a way to learn a new programming language, instead of just maintaining legacy code.

But it’s less clear how to get from essentially “focused practice” to “the car is safe enough”. Perhaps another paper is forthcoming that makes that leap.