Yesterday Udacity announced that my colleague, Oliver Cameron, is spinning out his own autonomous vehicle company, Voyage.

Friends have texted to ask if that means I’m now part of Voyage, and the answer is no.

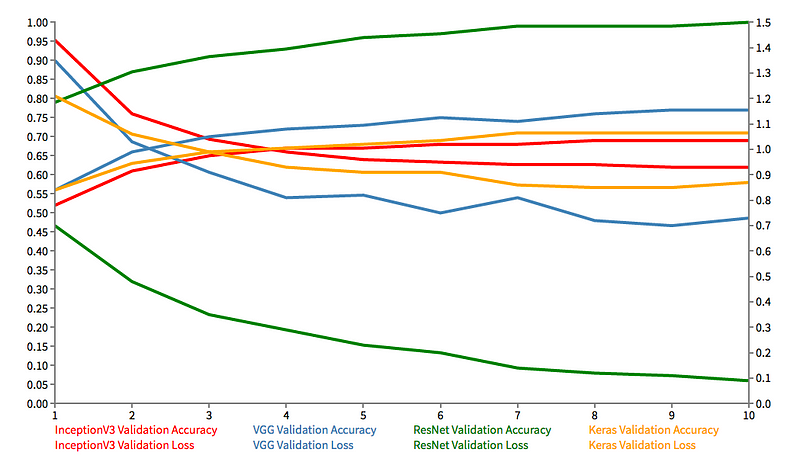

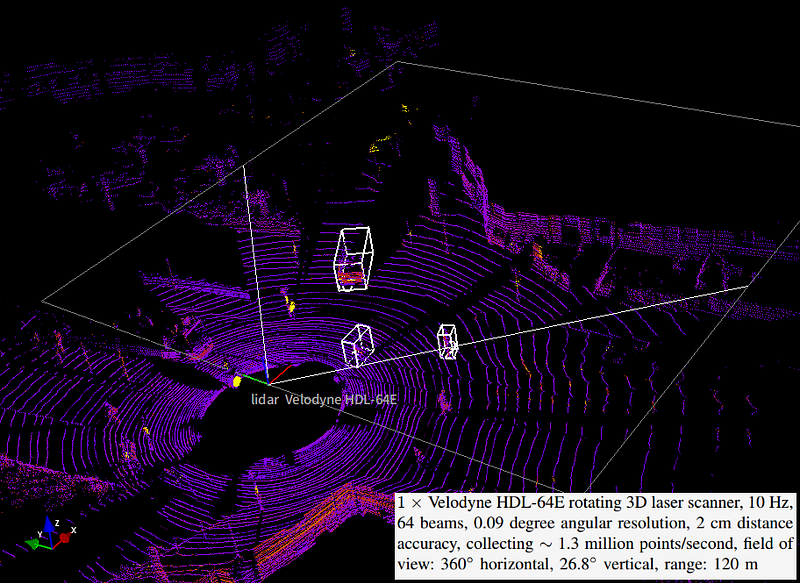

I’m staying at Udacity to build the Self-Driving Car Engineer Nanodegree Program, which has thousands of students and is a lot of fun. We’ve launched modules on Deep Learning, Computer Vision, Sensor Fusion, and Localization, and development is underway on Control, Path Planning, and System Integration, plus several elective modules.

If you’re reading this, you really should sign up for the program 😉

Oliver recruited me to Udacity, gave me lots of room to run, and has been a driving force in building the company for the last three years. While I wish him the best, it’s sad to see him go.

But Voyage is an independent company, so this won’t affect Udacity’s mission to place our students in jobs with our many amazing hiring partners, including Didi, Mercedes-Benz, NVIDIA, and Uber ATG.