Meet some of the people behind the self-driving car revolution!

The autonomous vehicle field is full of amazing personalities: people who possess remarkable technical skills and rarefied knowledge, but who are also supremely creative and incredibly passionate.

The team from Mercedes-Benz is a perfect example.

They’re one of our core partners for our Self-Driving Car Engineer Nanodegree program, and they’ve done a remarkable job of not just teaching our students technical skills, but also giving them a real sense of purpose and vision. Perhaps most importantly, they have helped to make complex autonomous vehicle concepts accessible to every single student we teach.

I feel fortunate to work with such great people, and I’d like to introduce you to some of them right now! Specifically, Axel, Michael, Dominik, Andrei, Maximillian, Tiffany, Tobi, Mahni, Beni, and Emmanuel!

First, meet Axel. In this video, he shares the history of Mercedes-Benz and autonomous vehicle research, and also describes the types of engineers they are hiring today:

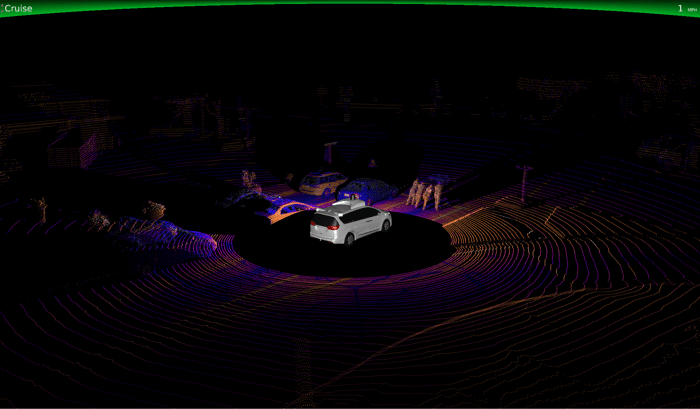

In this next video, Dominik, Michael, and Andrei outline the tools the Mercedes-Benz sensor fusion team uses to combine sensor data for tracking objects in the environment:

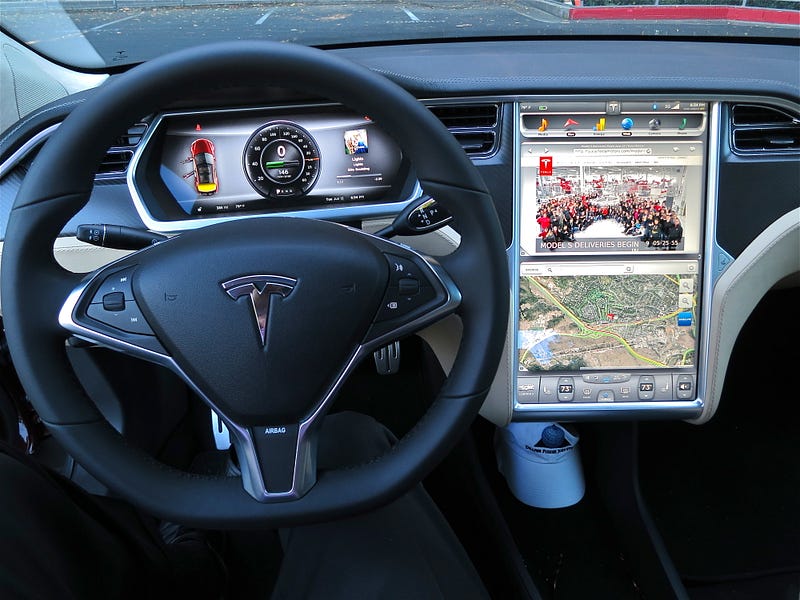

Next, Maximillian and Tiffany talk about the work they do on the localization team to help the vehicle determine where it is in the world:

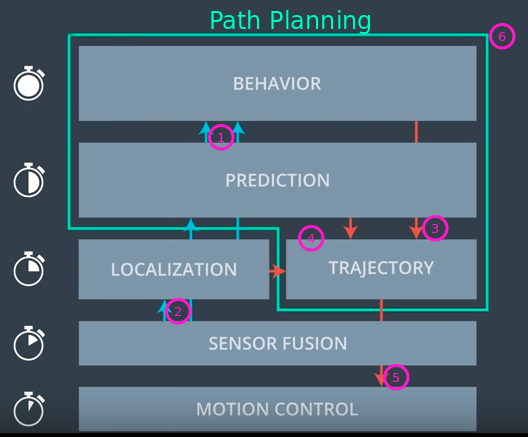

Finally, in this video, Tobi, Mahni, Beni, and Emmanuel outline the three phases of path planning. First, the prediction team estimates what the environment will look like in the future. Then, the behavior planning team decides which maneuver to perform next, based on those predictions. Lastly, the trajectory generation team builds a safe and comfortable trajectory to execute that maneuver.

Amazing people, right? Are you ready to join them? Then apply at Mercedes-Benz, because they’re hiring!

Not quite ready yet? Then apply now for our Self-Driving Car Engineer Nanodegree program! You’ll be joining the next generation of autonomous vehicle experts, and that’s a pretty amazing thing.