Here is a terrific collection of blog posts from Udacity Self-Driving Car students.

They cover the waterfront: from debugging computer vision algorithms, to measuring the radius of curvature of the road, to using Faster R-CNN, YOLO, and other cutting-edge network architectures.

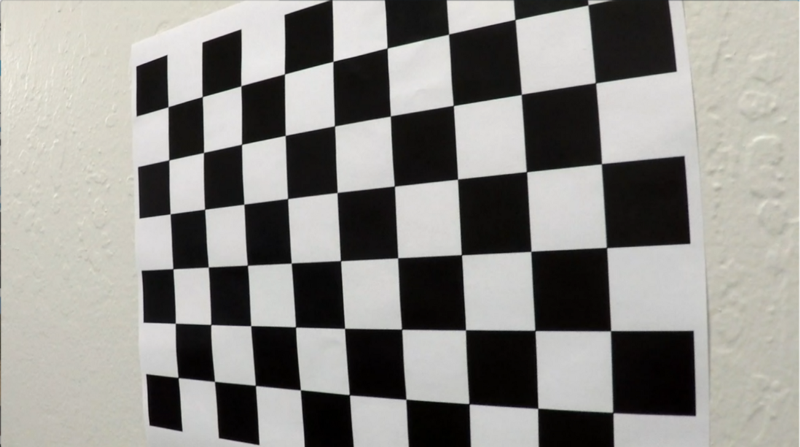

Bugger! Detecting Lane Lines

Jessica has a fun post analyzing some of the bugs she had to fix during her first project — Finding Lane Lines. Click through to see why the lines above are rotated 90 degrees!

Here I want to share what I did to investigate the bug. I printed the coordinates of the points my algorithm used to extrapolate the lines and plotted them separately. This was to check whether the problem was in the points or in the way the points were extrapolated into a line. E.g.: did I just throw away many of the useful points because they didn’t pass my test?
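For readers who want to try the same kind of check, here is a minimal sketch that is not Jessica's code. It keeps the point selection and the extrapolation as separate steps so each can be inspected on its own. The function names, and the choice to fit x as a function of y (which suits near-vertical lane lines), are assumptions made for illustration.

```python
import numpy as np


def fit_lane_line(points):
    """Least-squares fit of x = m*y + b through (x, y) points; returns (m, b)."""
    pts = np.asarray(points, dtype=float)
    m, b = np.polyfit(pts[:, 1], pts[:, 0], 1)  # x as a function of y
    return m, b


def extrapolate(m, b, y_bottom, y_top):
    """Endpoints of the fitted line at two image rows, as integer pixels."""
    return (
        (int(round(m * y_bottom + b)), y_bottom),
        (int(round(m * y_top + b)), y_top),
    )


def debug_report(points, y_bottom, y_top):
    """Summarise the inputs and the fit so the two can be checked separately."""
    pts = np.asarray(points, dtype=float)
    m, b = fit_lane_line(pts)
    residuals = pts[:, 0] - (m * pts[:, 1] + b)
    return {
        "n_points": len(pts),  # did the filter throw away too many points?
        "endpoints": extrapolate(m, b, y_bottom, y_top),
        "max_residual": float(np.abs(residuals).max()),  # is the fit itself off?
    }
```

A small `n_points` suggests the filtering step is at fault, while a large `max_residual` points at the fit or the extrapolation.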

Towards a real-time vehicle detection: SSD multibox approach

Vivek has gone above and beyond the minimum requirements in almost every area of the Self-Driving Car program, from helping students on the forums and in our Slack community to his project submissions. He really outdid himself with this post, which compares several cutting-edge neural network architectures for vehicle detection.

The final architecture, and the title of this post, is called the Single Shot Multibox Detector (SSD). SSD addresses the low-resolution issue in YOLO by making predictions based on feature maps taken at different stages of the convolutional network, since the layers closer to the image have higher resolution. It is as accurate as, and in some cases more accurate than, the state-of-the-art Faster R-CNN. To keep the number of bounding boxes manageable, an atrous convolutional layer was proposed. Atrous convolutional layers are inspired by the “algorithme à trous” in wavelet signal processing, where blank filters are applied to subsample the data for faster calculations.
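To make the "holes" idea concrete, here is a small one-dimensional sketch in NumPy. It is not taken from Vivek's post or from the SSD implementation. The function name `atrous_conv1d` and the `rate` parameter are illustrative assumptions, and it computes a correlation (no kernel flip), as CNN libraries usually do.

```python
import numpy as np


def atrous_conv1d(signal, kernel, rate):
    """'Valid' 1-D atrous (dilated) correlation.

    Kernel taps are spaced `rate` samples apart, so a 3-tap kernel with
    rate=2 covers 5 input samples while still using only 3 weights.
    """
    signal = np.asarray(signal, dtype=float)
    kernel = np.asarray(kernel, dtype=float)
    span = (len(kernel) - 1) * rate + 1  # receptive field of one output
    n_out = len(signal) - span + 1
    if n_out <= 0:
        raise ValueError("signal is shorter than the dilated kernel")
    out = np.empty(n_out)
    for i in range(n_out):
        # Take every `rate`-th sample inside the window, skipping the holes.
        out[i] = np.dot(signal[i:i + span:rate], kernel)
    return out
```

With `rate=1` this reduces to an ordinary sliding-window correlation. Larger rates widen the receptive field without adding weights.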

CNN Model Comparison in Udacity’s Driving Simulator

This is a fantastic post by Chris comparing and contrasting the performance of two different CNN architectures for end-to-end driving. Chris looked at an average-sized CNN architecture proposed by NVIDIA, and a huge, VGG-style architecture he built himself.

I experimented with various data pre-processing techniques and 7 different data augmentation methods, and varied the dropout of each of the two models that I tested. In the end I found that while the VGG-style model drove slightly smoother, it took more hyperparameter tuning to get there. NVIDIA’s architecture did a better job generalizing to the test track (Track 2) with less effort.

Hello Lane Lines

Josh Pierro took his lane-finding algorithm for a spin in his 1986 Mercedes-Benz!

From the moment I started project 1 (p1 — finding lane lines on the road) all I wanted to do was hook up a web cam and pump a live stream through my pipeline as I was driving down the road.

So, I gave it a shot and it was actually quite easy. With a PyCharm port of P1, cv2 (OpenCV) and a cheap web cam I was able to pipe a live stream through my model!
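If you want to try something similar, a rough sketch of the loop might look like the following. This is not Josh's code: `run_stream`, `find_lane_lines`, and `show` are hypothetical names, camera index 0 is an assumption, and the lane-finding step is a placeholder that returns the frame unchanged.

```python
def run_stream(capture, pipeline, show=None, max_frames=None):
    """Read frames from `capture`, run `pipeline` on each, and hand the
    result to `show`. Returns the number of frames processed."""
    count = 0
    while max_frames is None or count < max_frames:
        ok, frame = capture.read()
        if not ok:  # camera unplugged or stream ended
            break
        result = pipeline(frame)
        count += 1
        if show is not None and show(result) is False:
            break  # the display callback asked to stop
    return count


def main():
    import cv2  # OpenCV, imported here so run_stream stays dependency-free

    def find_lane_lines(frame):
        # Hypothetical placeholder: swap in your own project 1 pipeline.
        return frame

    def show(image):
        cv2.imshow("lane lines", image)
        return (cv2.waitKey(1) & 0xFF) != ord("q")  # press q to quit

    capture = cv2.VideoCapture(0)  # assumes the web cam is device 0
    try:
        run_stream(capture, find_lane_lines, show)
    finally:
        capture.release()
        cv2.destroyAllWindows()
```

Calling `main()` starts the live view. Keeping the loop separate from the OpenCV calls means the pipeline can be exercised with recorded frames before taking it on the road.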

Udacity SDCND : Advanced Lane Finding Using OpenCV

Paul does a great job laying out his computer vision pipeline for detecting lane lines on a curving road. He even compares his findings for radius of curvature to US Department of Transportation standards!

Overall, my pipeline looks like the following:

Let’s look at each stage in some detail
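As a side note on the radius-of-curvature measurement mentioned above, here is a minimal sketch of one common way to compute it. It is not Paul's pipeline. It assumes the lane pixels have already been found and converted to real-world units, fits a second-order polynomial x = A·y² + B·y + C, and applies the standard curvature formula R = (1 + (2Ay + B)²)^(3/2) / |2A|. The function name and arguments are illustrative.

```python
import numpy as np


def radius_of_curvature(xs, ys, y_eval):
    """Radius of curvature of the fitted lane line at y = y_eval.

    Fits x = A*y**2 + B*y + C to the points. The result is in the same
    units as the inputs, so convert pixels to metres first if you want
    to compare against road-design figures.
    """
    A, B, _ = np.polyfit(ys, xs, 2)
    return (1.0 + (2.0 * A * y_eval + B) ** 2) ** 1.5 / abs(2.0 * A)
```

For a nearly straight lane, A approaches zero and the radius becomes very large, so it is worth capping or flagging those values before reporting them.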