Data is the key to deep learning, and machine learning generally.

In fact, Stanford professor and machine learning guru (and Coursera founder, and Baidu Chief Scientist, and…) Andrew Ng says that it’s not the engineer with the best machine learning model that wins, rather it’s whoever has the most data.

One way to get a lot of data is to painstakingly collect a lot of it. All else equal, this is the best way to compile a huge machine learning dataset.

But all else is rarely equal, and compiling a big dataset is often prohibitively expensive.

Enter data augmentation.

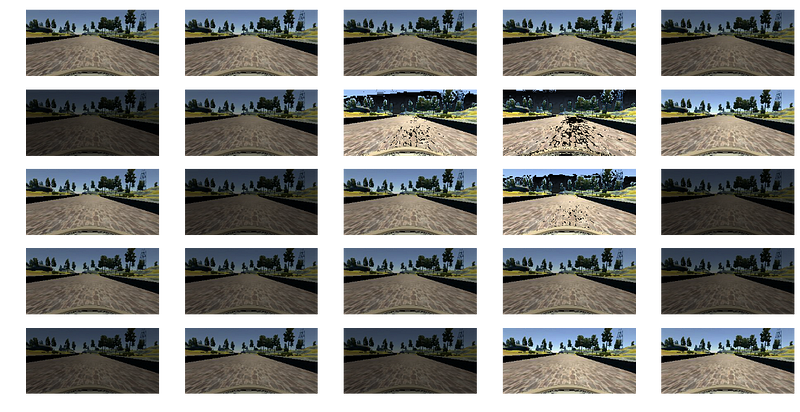

The idea behind data augmentation (or image augmentation, when the data consists of images) is that an engineer can start with a relatively small data set, make lots of copies, and then perform interesting transformations on those copies. The end result will be a really large dataset.

One of the Udacity Self-Driving Car Engineer Nanodegree Program students, Vivek Yadav, has a terrific tutorial on how he used image augmentation to train his network for the Behavioral Cloning Project.

1. Augmentation:

A. Brightness Augmentation

B. Perspective Augmentation

C. Horizontal and Vertical Augmentation

D. Shadow Augmentation

E. Flipping

2. Preprocessing

3. Sub-sampling